Fine-tuning is the essential process of adapting pre-trained AI models to excel in specialized domains. This guide breaks down the mechanics of model adaptation, identifies high-impact use cases, and outlines the blueprint for data preparation. Whether you are exploring the concept or ready to deploy, FPT AI Factory explains what fine-tuning is and provides the infrastructure to power your transition from general AI to domain expertise.

1. What is Fine-Tuning?

Fine-tuning is the process of taking a pre-trained foundation model, one already trained on vast amounts of general data, and continuing to train it on a smaller, task-specific dataset. Rather than starting from zero, you’re starting from a model that already understands language, context, and structure. You’re simply teaching it to apply that knowledge in a more focused way.

Fine-tuning is widely considered a subset of transfer learning: the practice of leveraging knowledge a model has already acquired as the foundation for learning new tasks. This is especially valuable for deep learning models with billions of parameters, such as large language models (LLMs) used in natural language processing (NLP), or vision models like CNNs and Vision Transformers (ViTs) used for image classification and object detection.

In practical terms, fine-tuning can be used to adjust the conversational tone of a customer-facing chatbot, inject proprietary business knowledge into a model, or adapt a general-purpose LLM for highly specialized fields like law, medicine, or finance, all without the cost of building a new model from the ground up.

Fine-tuning is the process of further training a pre-trained model to adapt it to specific tasks (Source: FPT AI Factory)

2. When to Use Fine-Tuning?

Fine-tuning gives a model a sharper, more focused lens: feeding it industry-specific terminology, task-oriented interactions, or proprietary knowledge that a general-purpose model simply wasn’t built to handle.

- Task-specific adaptation: When you need a model to excel at a narrow, well-defined task, such as sentiment analysis, contract review, code generation, or domain-specific Q&A, fine-tuning is the most direct path. While prompt engineering and embeddings can help, they often fall short in complex or high-stakes scenarios. Fine-tuning goes deeper by reshaping the model itself, leading to more consistent and higher-accuracy outputs.

- Data security and compliance matter: In regulated industries like healthcare, finance, or legal, sensitive data can’t be routed through third-party APIs. Fine-tuning locally on your own secure infrastructure keeps everything in your controlled environment.

- Bias mitigation: Pre-trained models inherit biases from the data they were built on. Fine-tuning on a carefully curated, balanced dataset lets you actively correct for those biases, especially important for customer-facing applications where trust is on the line.

- Tone and style control: Fine-tuning enables the model to consistently follow a specific voice, whether it’s formal, conversational, or aligned with a brand identity, something prompt engineering alone often struggles to maintain.

- Your business keeps evolving: When products launch, regulations change or customer behavior shifts, fine-tuning supports continuous learning, allowing you to periodically refresh the model without rebuilding from zero. It’s how you keep AI aligned with where your business actually is.

- Need real industry depth: Some domains demand more than general intelligence. They require specialized expertise embedded in the model itself.

  - In healthcare, fine-tuning on clinical data can improve diagnostic support.

  - In finance, fine-tuned models can detect fraud patterns that a generic model is likely to miss.

When the stakes are high, the difference between a general answer and a domain-trained one is the difference between useful and unreliable.

Use fine-tuning for specialized tasks, better accuracy, and control over data and output style.

>> Explore: Transfer Learning vs. Fine Tuning: A Comprehensive Guide

3. How does Fine-Tuning work?

Fine-tuning works by taking a pre-trained model and training it further on a smaller dataset that is relevant to a specific task. Instead of building a model from scratch, this process adapts an existing model so it performs better in a defined business or domain context. A typical fine-tuning workflow includes the following steps:

3.1. Step 1: Select a base model

The process starts with a pre-trained model that already understands general language patterns. This base model provides the foundation, so teams only need to adapt it for a narrower use case.

3.2. Step 2: Prepare task-specific data

Next, teams collect and organize the data used for fine-tuning. Depending on the objective, this may include question-answer pairs, classification labels, instruction-response examples, or domain-specific documents. The quality of this dataset has a direct impact on the final model performance.

3.3. Step 3: Train the model on the new dataset

The model is then trained further on the prepared dataset. During this stage, its weights are updated so it becomes better at handling the target task, following the desired format, or responding with more domain-relevant outputs.
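To make the idea of "updating weights" concrete, here is a deliberately tiny sketch. It assumes a one-parameter linear model rather than a real LLM, and every number in it is made up for illustration. The point is only that training continues from an existing weight instead of starting from a random one.

```python
# Toy illustration only: "fine-tuning" a one-parameter model y = w * x.
# The starting weight and the dataset are invented for this example.

def mse(w, data):
    """Mean squared error of the model y = w * x on (x, y) pairs."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)

def fine_tune(w, data, lr=0.01, epochs=200):
    """Continue gradient descent on the MSE loss, starting from weight w."""
    for _ in range(epochs):
        # Gradient of mean((w*x - y)^2) with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

pretrained_w = 2.0  # stands in for weights learned on general data
task_data = [(1.0, 2.5), (2.0, 5.0), (3.0, 7.5)]  # small task-specific set

tuned_w = fine_tune(pretrained_w, task_data)
print(f"loss before: {mse(pretrained_w, task_data):.4f}")
print(f"loss after:  {mse(tuned_w, task_data):.4f}")
```

Real fine-tuning applies the same loop to millions or billions of weights, usually through a training framework rather than hand-written gradients.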

3.4. Step 4: Evaluate the results

After training, the model is tested to measure whether it performs better than the original version. Teams typically evaluate accuracy, relevance, consistency, or task completion quality, depending on the use case.
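As a rough sketch of this comparison, the snippet below scores a base model and a fine-tuned model on the same held-out examples using exact-match accuracy. The labels and predictions are hypothetical placeholders, and exact match is only one possible metric; generative tasks usually need richer measures.

```python
def accuracy(predictions, references):
    """Fraction of predictions that exactly match the reference label."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have equal length")
    if not references:
        raise ValueError("the test set is empty")
    correct = sum(p == r for p, r in zip(predictions, references))
    return correct / len(references)

# Hypothetical outputs from both models on the same held-out test set.
references = ["positive", "negative", "negative", "positive", "neutral"]
base_preds = ["positive", "positive", "negative", "neutral", "neutral"]
tuned_preds = ["positive", "negative", "negative", "positive", "positive"]

base_acc = accuracy(base_preds, references)
tuned_acc = accuracy(tuned_preds, references)
print(f"base: {base_acc:.2f}, fine-tuned: {tuned_acc:.2f}")
```

Scoring both models on identical examples is what makes the before-and-after comparison meaningful.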

3.5. Step 5: Deploy and improve over time

Once the fine-tuned model meets the required standard, it can be deployed into real applications. From there, teams can continue monitoring outputs, collecting feedback, and retraining when new data or new requirements appear.

Depending on cost, model size, and performance goals, organizations may use either full fine-tuning or more efficient methods such as LoRA and other parameter-efficient fine-tuning (PEFT) approaches.
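To see why LoRA is cheaper than full fine-tuning, it helps to count trainable parameters. LoRA keeps a weight matrix of shape d x k frozen and trains two small matrices of shapes d x r and r x k instead. The sketch below does that arithmetic; the layer size 4096 and rank 8 are illustrative assumptions, not recommendations.

```python
def full_finetune_params(d, k):
    """Trainable parameters when a d x k weight matrix is updated directly."""
    return d * k

def lora_params(d, k, r):
    """Trainable parameters for a rank-r LoRA update: B is d x r, A is r x k."""
    return r * (d + k)

# Assumed sizes for illustration: one 4096 x 4096 projection, LoRA rank 8.
d, k, r = 4096, 4096, 8
full = full_finetune_params(d, k)
lora = lora_params(d, k, r)

print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank {r}):   {lora:,} trainable parameters")
print(f"LoRA trains {lora / full:.4%} of the layer")
```

Under these assumed sizes, the LoRA update trains 1/256 of the parameters in that layer, which is where most of the memory and cost savings come from.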

Use the updated dataset to train and refine the model for better results.

>> Explore: A Step-by-Step Guide to Fine-Tuning Models with FPT AI Factory and NVIDIA NeMo

4. Steps to prepare data for Fine-Tuning

4.1. Step 1: Convert Raw Data

Raw data comes in all shapes and sizes: PDFs, spreadsheets, logs, chat transcripts, and more. This step is about transforming that unstructured mess into clean, model-readable inputs. Think of it as translating your data into a language the AI can actually learn from.
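As one small example of this conversion, the sketch below turns a raw chat transcript into prompt-response pairs. The `Customer:` and `Agent:` prefixes and the function name `transcript_to_pairs` are assumptions made for illustration; real transcripts will need their own parsing rules.

```python
def transcript_to_pairs(transcript):
    """Turn 'Customer:' / 'Agent:' lines into prompt-response pairs."""
    pairs = []
    pending_prompt = None
    for line in transcript.splitlines():
        line = line.strip()
        if line.startswith("Customer:"):
            # Remember the question until the agent's reply arrives.
            pending_prompt = line[len("Customer:"):].strip()
        elif line.startswith("Agent:") and pending_prompt is not None:
            response = line[len("Agent:"):].strip()
            pairs.append({"prompt": pending_prompt, "response": response})
            pending_prompt = None
    return pairs

raw = """
Customer: How do I reset my password?
Agent: Open Settings, choose Security, then select Reset password.

Customer: Can I change my billing date?
Agent: Yes, you can change it once per billing cycle from the Billing page.
"""

pairs = transcript_to_pairs(raw)
for pair in pairs:
    print(pair)
```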

4.2. Step 2: Standardize Data Format

Consistency is the foundation of good training. Once converted, all data must follow a unified structure: the same fields, the same syntax, and the same formatting rules. Inconsistencies at this stage can confuse the model and quietly hurt its performance later.
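A minimal sketch of standardization might look like the following. It assumes a two-field schema (`prompt`, `response`) written out as JSON Lines; both the schema and the list of field-name aliases are hypothetical choices, and your training tool may expect a different layout.

```python
import json

REQUIRED_FIELDS = ("prompt", "response")

# Hypothetical aliases that different data sources might use for the same field.
ALIASES = {"question": "prompt", "input": "prompt",
           "answer": "response", "output": "response"}

def standardize(record):
    """Map differing field names onto one schema and normalize whitespace."""
    clean = {}
    for key, value in record.items():
        field = ALIASES.get(key.lower(), key.lower())
        if field in REQUIRED_FIELDS:
            clean[field] = " ".join(str(value).split())
    missing = [f for f in REQUIRED_FIELDS if not clean.get(f)]
    if missing:
        raise ValueError(f"record is missing fields: {missing}")
    return {f: clean[f] for f in REQUIRED_FIELDS}

records = [
    {"Question": "What is  the refund window?", "Answer": "30 days from delivery."},
    {"input": "Do you ship abroad?\n", "output": "Yes, to selected countries."},
]

# One JSON object per line, every line with the same two fields.
jsonl = "\n".join(json.dumps(standardize(r)) for r in records)
print(jsonl)
```

Rejecting incomplete records early, as the `ValueError` does here, is usually easier than debugging a training run that silently learned from malformed rows.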

4.3. Step 3: Organize the Dataset

Not all data plays the same role. This step involves splitting your dataset into three distinct groups: training data (what the model learns from), validation data (used to monitor progress), and test data (used to measure final performance). A well-organized dataset makes evaluation meaningful and results trustworthy.
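The split itself can be sketched in a few lines. The 80/10/10 proportions and the fixed seed below are common conventions used here as assumptions, not requirements; the important property is that no example appears in more than one group.

```python
import random

def split_dataset(examples, train_pct=80, val_pct=10, seed=42):
    """Shuffle once with a fixed seed, then cut into train/validation/test."""
    if train_pct <= 0 or val_pct < 0 or train_pct + val_pct >= 100:
        raise ValueError("percentages must leave room for a test split")
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_train = len(shuffled) * train_pct // 100
    n_val = len(shuffled) * val_pct // 100
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # whatever remains becomes the test set
    return train, val, test

examples = [f"example-{i}" for i in range(100)]
train_set, val_set, test_set = split_dataset(examples)
print(len(train_set), len(val_set), len(test_set))
```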

4.4. Step 4: Balance Domain & General Data

Specialization is the goal, but over-specialization is a trap. If your dataset is 100% domain-specific, the model may lose some of its general reasoning ability, a failure mode often called catastrophic forgetting. Striking the right balance between domain knowledge and general language understanding keeps the model both accurate and adaptable.
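One simple way to blend the two sources is to add a fixed share of general examples to the domain set. In the sketch below, the 20% share is an arbitrary assumption chosen for illustration, and `mix_datasets` is a hypothetical helper; the right ratio depends on your task and should be tuned against validation results.

```python
import random

def mix_datasets(domain, general, general_fraction=0.2, seed=0):
    """Return a shuffled mix where about `general_fraction` of examples are general."""
    if not 0 <= general_fraction < 1:
        raise ValueError("general_fraction must be in [0, 1)")
    # Solve n_general / (len(domain) + n_general) = general_fraction.
    n_general = round(len(domain) * general_fraction / (1 - general_fraction))
    n_general = min(n_general, len(general))  # cannot sample more than we have
    rng = random.Random(seed)
    mixed = list(domain) + rng.sample(list(general), n_general)
    rng.shuffle(mixed)
    return mixed

# Placeholder data: 80 domain examples and a larger pool of general ones.
domain_data = [("domain", i) for i in range(80)]
general_data = [("general", i) for i in range(1000)]

mixed = mix_datasets(domain_data, general_data)
n_general = sum(1 for source, _ in mixed if source == "general")
print(f"{len(mixed)} examples, {n_general} general")
```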

Pro tip: Skipping or rushing these steps is the most common reason fine-tuned models underperform. Invest time here, and the training process becomes significantly smoother.

Ready to put your data to work? Data preparation is only the beginning. The real power lies in what comes next, and that’s where Model Fine-Tuning takes over. Built for enterprise scale with no-code simplicity, multi-GPU performance, and fully isolated secure environments, it gives your organization everything needed to go from prepared data to production-ready AI without compromise.

Get started with $100 in free credits. For 30 days, unlock the full power of the FPT AI Factory ecosystem: GPU containers built for scale, AI Notebooks for rapid experimentation, AI Studio for end-to-end fine-tuning workflows, and a growing library of 20+ industry-leading models, including fine-tuning support for Llama-3.3.

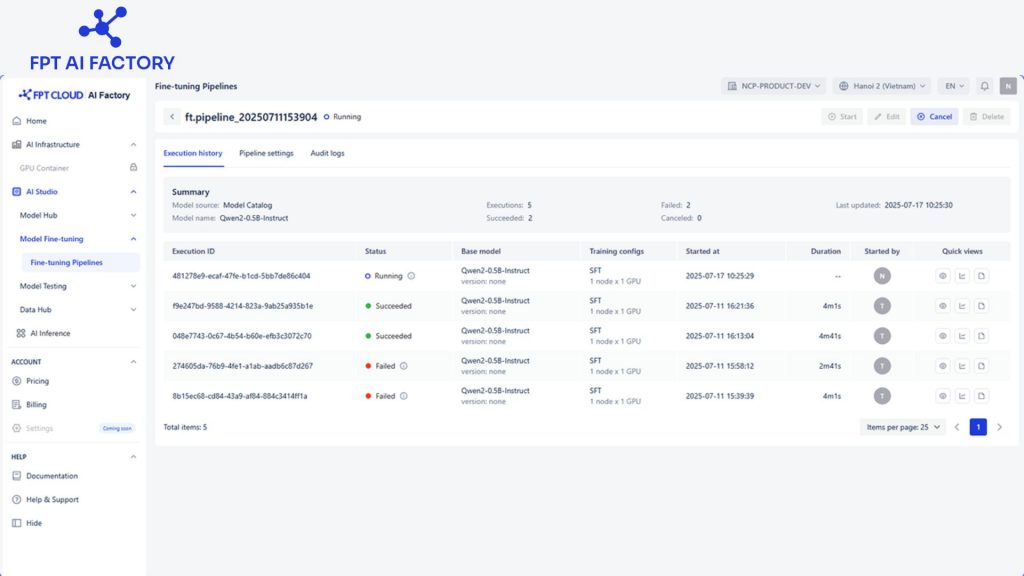

Model Fine-Tuning interface (Source: FPT AI Factory)

5. FAQs

5.1. How do you prepare data for fine-tuning?

Data preparation is the most critical and most underestimated step in fine-tuning. It involves four key stages: converting raw data into a structured, model-readable format; standardizing that data so every entry follows consistent rules; organizing it into training, validation, and test splits; and finally, balancing domain-specific content with general data to keep the model both specialized and adaptable. Skipping or rushing any of these steps is the fastest way to undermine an otherwise well-designed model.

5.2. What is an example of fine-tuning an LLM?

Consider a general-purpose language model being fine-tuned for legal document summarization. The base model already understands grammar, context, and reasoning, but it doesn’t know how to handle legal terminology, clause structures, or jurisdiction-specific nuances. By fine-tuning it on a curated dataset of legal contracts and their summaries, the model learns to produce accurate, concise, and contextually appropriate summaries tailored specifically for legal professionals.

5.3. What is the difference between fine-tuning and transfer learning?

Transfer learning is the broader concept: it refers to taking knowledge learned from one task and applying it to another. Fine-tuning is a specific type of transfer learning where a pre-trained model’s weights are further adjusted on a new, targeted dataset. In short, all fine-tuning is transfer learning, but not all transfer learning involves fine-tuning. The key distinction is that fine-tuning actively updates the pre-trained model’s parameters, while other transfer learning approaches, such as feature extraction, keep the original weights frozen and train only a new component on top.
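The contrast can be sketched with a toy two-weight model, where `backbone` stands in for the pre-trained weights and `head` for a new task-specific layer. Everything here (the model, the data, the learning rate) is an illustrative assumption; the only point is which weights are allowed to change.

```python
def train(backbone, head, data, freeze_backbone, lr=0.01, epochs=100):
    """Gradient descent on MSE for the model y = head * backbone * x."""
    for _ in range(epochs):
        g_backbone = g_head = 0.0
        for x, y in data:
            err = head * backbone * x - y
            g_head += 2 * err * backbone * x / len(data)
            g_backbone += 2 * err * head * x / len(data)
        head -= lr * g_head
        if not freeze_backbone:
            # Only fine-tuning is allowed to touch the pre-trained weight.
            backbone -= lr * g_backbone
    return backbone, head

data = [(1.0, 3.0), (2.0, 6.0)]  # target relationship: y = 3 * x
pretrained_backbone, new_head = 2.0, 1.0

# Feature extraction: pre-trained weight frozen, only the head learns.
fe_backbone, fe_head = train(pretrained_backbone, new_head, data, freeze_backbone=True)

# Fine-tuning: the pre-trained weight is updated as well.
ft_backbone, ft_head = train(pretrained_backbone, new_head, data, freeze_backbone=False)

print(f"feature extraction: backbone={fe_backbone:.3f}, head={fe_head:.3f}")
print(f"fine-tuning:        backbone={ft_backbone:.3f}, head={ft_head:.3f}")
```

Both runs fit this toy data, but only the fine-tuning run ends with a different backbone value than it started with.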

The difference between AI that’s good enough and AI that’s built for you starts with the right platform. With Model Fine-Tuning, you can transform raw data into a production-ready model through a streamlined process that combines no-code simplicity, enterprise-grade security, and flexible pricing that scales with your needs. It empowers businesses to build AI solutions tailored to their specific use cases quickly and efficiently.

Beyond fine-tuning, FPT AI Factory offers a comprehensive ecosystem of AI services, including scalable GPU Virtual Machines, AI Notebooks for experimentation, AI Studio for end-to-end development, and Serverless Inference. This all-in-one platform supports the entire AI lifecycle, from data preparation and training to deployment and optimization.

Individuals receive $100 in credits upon registration, available for immediate use after logging in. For businesses with more advanced needs, such as customized solutions or large-scale deployments, please reach out through the FPT AI Factory contact form. The FPT team will provide tailored consultation and dedicated support to meet your specific requirements. If your enterprise is ready to move beyond generic AI and build solutions designed specifically for your business, now is the time to take the next step. Contact FPT AI Factory now!

Contact information:

Hotline: 1900 638 399

Email: support@fptcloud.com

Explore related articles:

Prompt Engineering vs Fine-tuning: A Guide to better LLM

Transfer Learning vs. Fine Tuning: A Comprehensive Guide

What Is Supervised Fine-Tuning? Process, Use Cases, Benefits