What is LLM inference and why is it the defining factor in delivering fast, responsive AI applications to your end-users? While training builds a model’s foundational knowledge, demanding highly optimized infrastructure to minimize latency and control operational costs. At FPT AI Factory, we provide the robust, scalable computing resources necessary to accelerate your inference workloads and bring enterprise-grade generative AI solutions to market seamlessly.

1. What is LLM Inference?

LLM inference is the process of using a trained large language model (LLM) to generate outputs, such as text, answers, or predictions, based on new user inputs. Instead of learning or updating weights like in training, inference is a forward-only process where the model takes input, processes it through its layers, and produces results in real time.

To understand this better, LLM inference is a specific case of AI inference. In general, AI inference refers to the stage where a trained model is applied to real-world data to make predictions, classifications, or decisions. This is the phase where AI actually creates value, powering applications like chatbots, recommendation systems, fraud detection, and more.

As AI systems move from experimentation to production, inference becomes the most important and resource-intensive stage. It runs continuously, directly impacting latency, scalability, and cost. In many real-world deployments, inference accounts for the majority of operational expenses over a model’s lifecycle. This is where AI inference infrastructure comes in.

2. How does LLM Inference work?

LLM inference follows a structured pipeline where input text is transformed step by step into meaningful output. Below is a simplified breakdown of how modern large language models generate responses in real time.

Step 1: Tokenisation

The first step is converting raw input text into smaller units called tokens. These tokens can be words, subwords, or even characters, depending on the tokenizer used.

Each token is then mapped to a numerical ID so the model can process it mathematically. This transformation is essential because neural networks cannot directly understand raw text. Tokenisation also determines how efficiently the model handles language and context.

LLM inference follows a structured pipeline where input text is transformed step by step (Source: FPT AI Factory)

Step 2: Embedding lookup

Once tokenized, each token ID is converted into a vector representation using an embedding table. These embeddings capture semantic meaning, allowing the model to understand relationships between words. At this stage, tokens are no longer just symbols, they become points in a high-dimensional space. Similar words or concepts are placed closer together, helping the model reason about context and meaning.

Step 3: Forward pass through transformer layers

The embeddings are then passed through multiple transformer layers, where the model processes context using self-attention mechanisms. Each token “attends” to other tokens to understand relationships within the input. This forward pass involves complex computations across many layers, refining the representation of each token. By the end of this stage, the model has built a deep understanding of the input sequence and its context.

Step 4: Sampling/decoding

After processing, the model outputs a probability distribution over possible next tokens. Decoding strategies, such as greedy decoding, top-k, or nucleus sampling, are used to select the next token. This step determines how the model generates text, balancing between accuracy and creativity. Different decoding methods can significantly impact the style and quality of the output.

Step 5: Autoregressive generation

LLMs generate text in an autoregressive manner, meaning each new token is predicted based on all previously generated tokens. This process repeats step by step until the response is complete. Because each token depends on prior context, inference is inherently sequential. This makes performance, latency, and memory optimization critical for real-world applications.

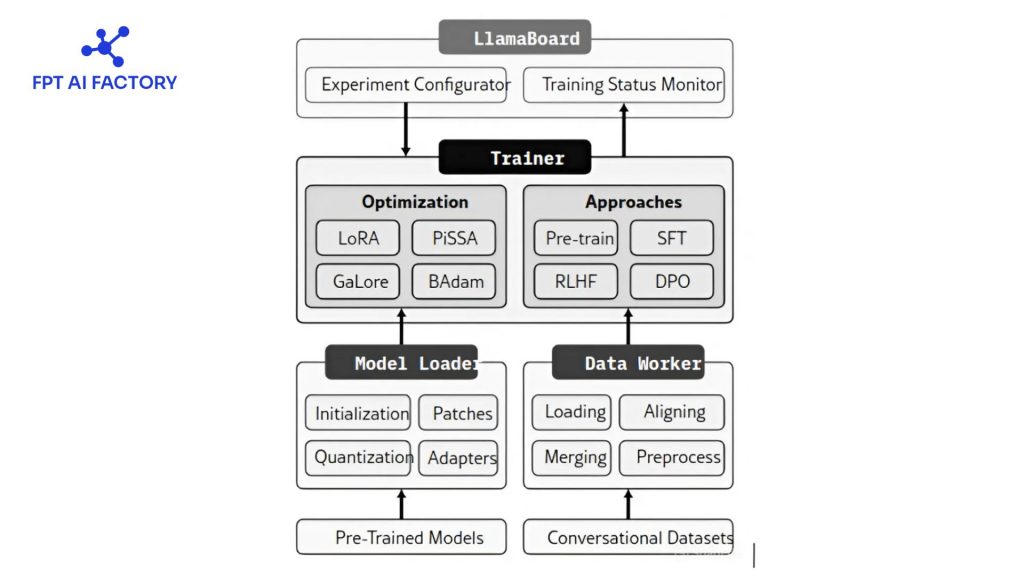

As LLM inference moves from experimentation to production, managing infrastructure (GPU provisioning, scaling, APIs) becomes a major challenge for most teams. This is where serverless inference plays an important role, allowing developers to run AI models on demand without handling servers or complex deployment pipelines.

One example is FPT AI Factory Serverless Inference, a service that enables businesses to deploy and use AI models via API in a fully managed environment. Instead of building and maintaining infrastructure, users can directly integrate pre-trained models into their applications with minimal setup.

Key benefits include:

- No infrastructure management: No need to provision GPUs, manage servers, or maintain systems, everything is handled by the platform.

- Pay-as-you-go cost efficiency: Only pay for actual usage, avoiding idle resource costs

- Automatic scaling: The system dynamically scales based on demand, ensuring stable performance even with fluctuating workloads

- Fast integration via API: Easily connect AI models to applications in hours instead of days

LLMs generate text in an autoregressive manner (Source: FPT AI Factory)

3. Key Metrics for LLM Inference Performance

To effectively evaluate the performance of Large Language Model (LLM) inference systems, relying on a single metric is not sufficient. Instead, multiple key metrics must be considered to capture user experience, system throughput, and infrastructure efficiency. The following metrics are commonly used in practice to provide a comprehensive view of LLM inference performance:

| Metric | Description | How to Interpret It |

| Time to First Token (TTFT) | The time between sending a request and receiving the first generated token. It reflects the initial response delay of the system. | A lower TTFT means the system feels more responsive to users. A higher TTFT often makes the system appear slow, even if the rest of the response is generated quickly. |

| Tokens per second (TPS) | The number of tokens generated per second measures system throughput under load. | A higher TPS indicates that the system can handle more data efficiently. If TPS decreases as traffic increases, it usually means the system is reaching its resource limits. |

| Latency per token | The average time required to generate each token after the first one is also known as inter-token latency or TPOT. | Lower latency per token results in smoother and more real-time output. Higher latency can make responses feel delayed or less fluid. |

| Concurrency/batch size | The number of requests processed at the same time or grouped in a single inference batch. | Increasing concurrency or batch size can improve efficiency and reduce cost per request. However, if set too high, it may lead to longer waiting times and slower initial responses. |

| GPU utilisation | The level of GPU resource usage during inference, including compute, memory, and bandwidth. | High and stable GPU utilisation suggests efficient use of hardware. Low utilisation indicates wasted resources, while consistently maximum utilisation may lead to bottlenecks and increased latency. |

4. Solutions to optimize LLM Inference

Instead of scaling hardware indefinitely, modern approaches focus on improving how models are executed, scheduled, and compressed. The following techniques represent the most effective strategies widely adopted in real-world LLM deployments.

4.1. Quantization

Quantization is a technique that reduces the numerical precision of model weights and activations, typically converting them from formats like FP16 or FP32 to lower-bit representations such as INT8 or INT4. This significantly decreases memory usage and bandwidth requirements, enabling large models to run on smaller GPUs while also improving inference speed.

In practice, quantization allows systems to load larger models into limited GPU memory and accelerate computation due to smaller data transfers. However, it introduces a trade-off between efficiency and accuracy, as lower precision may slightly degrade model quality.

Common approaches include:

- Post-Training Quantization (PTQ), which applies quantization without retraining the model

- GPTQ, which minimizes quantization error layer by layer

- AWQ, which preserves important weights based on activation sensitivity

4.2. Model parallelism and tensor parallelism

When a model is too large to fit into a single GPU, model parallelism is used to distribute computation across multiple devices. Tensor parallelism, a specific form of model parallelism, splits individual weight matrices across GPUs so that each device computes a portion of the operation and synchronizes results afterward.

This approach enables serving very large models, such as those with tens of billions of parameters, by leveraging multiple GPUs simultaneously. While it improves scalability, it also introduces communication overhead between devices, which can become a bottleneck if not carefully managed.

4.3. Continuous batching

Continuous batching is an advanced scheduling technique that improves GPU utilization by dynamically updating batches during inference. Instead of waiting for all requests in a batch to finish, the system replaces completed sequences with new incoming requests at each decoding step.

This approach avoids idle GPU time caused by variable-length outputs and maintains a consistently high level of resource utilization. As a result, it significantly increases throughput and reduces overall latency in real-world workloads.

4.4. Speculative decoding

Speculative decoding is an inference optimization technique that accelerates token generation by using a smaller, faster model to propose multiple tokens ahead, which are then verified by a larger model in a single forward pass.

This method reduces the number of expensive forward passes required by the main model, effectively breaking the sequential bottleneck of autoregressive generation. When the draft model’s predictions are accurate, multiple tokens can be accepted at once, yielding substantial speedups without changing the final output.

4.5. Inference frameworks

Inference frameworks provide optimized environments for serving LLMs at scale by integrating multiple techniques such as batching, caching, and parallelism. Popular frameworks like vLLM, TensorRT-LLM, and Hugging Face Text Generation Inference implement advanced optimizations such as continuous batching and efficient memory management.

These frameworks abstract away low-level complexity and allow developers to deploy high-performance inference systems more easily. They are designed to maximize hardware utilization while maintaining low latency and high throughput in production environments.

5. LLM Inference Use Cases

LLM inference is the driving force behind the AI applications that businesses rely on every day. Once a model is trained, the inference phase is what actually generates real-time value and interacts with end-users. Here is a breakdown of the most common LLM inference use cases across different industries:

| Use Case | Description & Practical Examples |

| Customer Support Chatbots | Powers intelligent virtual assistants that provide instant, human-like responses to routine user inquiries.

Example: An enterprise bot handling IT helpdesk tickets or an e-commerce assistant managing order tracking 24/7. |

| Content & Copy Generation | Accelerates the creation of text materials, freeing up human workers from repetitive writing tasks.

Example: Automatically drafting personalized sales emails, generating blog outlines, or creating thousands of product descriptions at scale. |

| Document Summarization | Condenses lengthy reports, legal contracts, or meeting transcripts into concise, actionable insights.

Example: A financial application summarizing 50-page quarterly earnings reports into key bullet points for analysts. |

| Information Extraction | Identifies and pulls specific, structured data points (like names, dates, or financial figures) from unstructured text.

Example: Processing thousands of incoming invoices to automatically extract pricing data, dates, and vendor details for accounting software. |

| Code Generation & Debugging | Acts as an AI pair programmer to assist software developers by suggesting code snippets or identifying syntax errors.

Example: Translating legacy software code into modern frameworks or rapidly writing routine boilerplate functions. |

| Real-time Translation | Breaks down language barriers by providing highly accurate, context-aware translations that go beyond simple word-for-word matching.

Example: Instantly translating live customer chat logs to allow a single support team to assist a global user base. |

6. FAQs

6.1. What is the difference between training and inference LLM?

LLM training is the process by which the model learns patterns, language structure, and knowledge from massive datasets, requiring large-scale computing, memory, and time. In contrast, inference is the phase where the trained model is used to generate outputs for user queries through forward-pass computation only, without updating its parameters.

6.2. What is the difference between LLM inference and rag?

LLM inference refers to the process of generating responses using only the knowledge already stored in the model’s parameters after training. In contrast, Retrieval-Augmented Generation (RAG) is an architectural approach that enhances inference by retrieving relevant external data in real time and injecting it into the prompt before generation.

6.3. Why is LLM Inference so expensive?

LLM inference is expensive because it requires significant computational resources for every single user request, especially when serving at scale. Each query involves running large neural networks on GPUs, maintaining memory-heavy structures such as KV cache, and handling high concurrency demands in real time.

Ultimately, answering the question of what is LLM inference reveals that it is the critical phase where trained AI models actually deliver real-world value. Whether you are deploying real-time chatbots, generating complex code, or translating languages on the fly, efficient inference is what keeps these applications responsive.

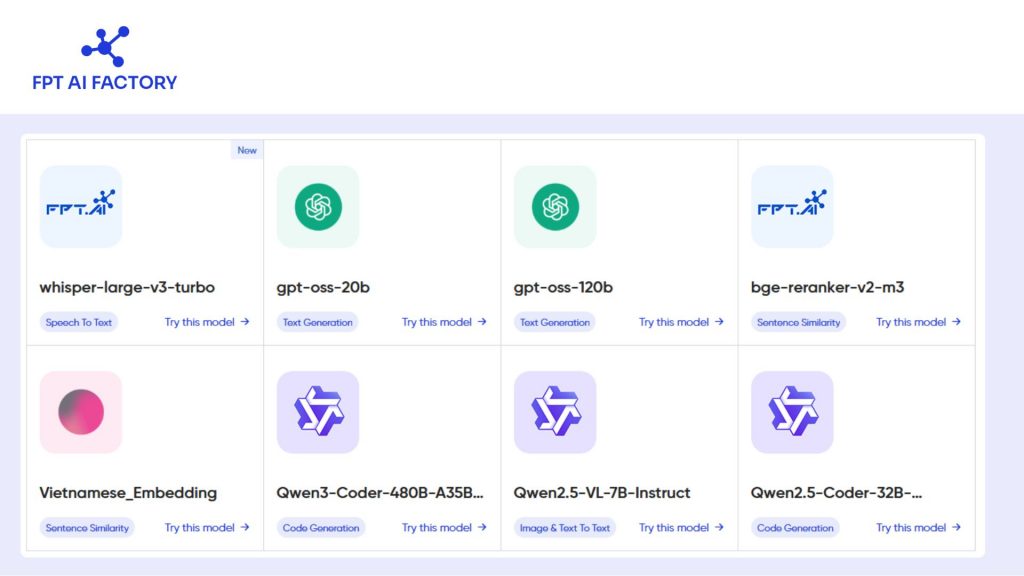

To help you experience our optimized environment, we are offering a Starter Plan with $100 in free credits for new users an be used instantly after logging in to explore the FPT AI Factory ecosystem for 30 days. This package is perfectly tailored for inference and development, including:

- $70 dedicated to AI Inference & AI Studio.

- $10 each for AI Notebooks, GPU Containers, and GPU Virtual Machines.

- Access to over 20+ advanced models, including up to 5M tokens with Llama-3.3.

For businesses with more advanced needs, such as customized solutions or large-scale deployments, let’s reach out via FPT AI Factory contact form. Our team will provide tailored consultation and support to match your specific requirements.

Contact information:

- Hotline: 1900 638 399

- Email: support@fptcloud.com