The rapid advancement of artificial intelligence (AI) is driving increasing demand for more powerful hardware, especially data center GPUs. NVIDIA H100 vs H200: Key GPU Differences and AI Power will help you better understand these two flagship GPUs. Understanding the differences between NVIDIA H100 and H200 enables businesses to choose the right solution, optimizing both performance and cost in AI deployment. This article FPT AI Factory will provide a detailed analysis to highlight the strengths of each GPU.

1. What is NVIDIA H100?

NVIDIA H100 GPU is a high-end data center GPU from NVIDIA, designed to accelerate AI, machine learning, deep learning, and high-performance computing (HPC) workloads. Based on the Hopper architecture, H100 delivers powerful performance for both training and inference, making it especially suitable for large AI models such as LLMs.

This GPU is commonly used by enterprises for:

- Training AI models

- Fine-tuning large language models

- Deploying AI at scale

- Processing complex data with high performance

NVIDIA H100 designed to accelerate AI, deep learning, and HPC workloads (Source: FPT AI Factory)

2. What is NVIDIA H200?

If the NVIDIA H100 is considered a powerful GPU for modern AI and HPC workloads, then the NVIDIA H200 is its next-generation upgrade, further optimized for large-scale AI tasks. Still based on the Hopper architecture, H200 stands out with higher memory capacity and bandwidth, enabling more efficient processing of demanding tasks such as training, fine-tuning, and inference for large language models (LLMs).

NVIDIA H200 builds on H100 with enhanced memory performance, efficient for demanding AI and LLM workloads (Source: FPT AI Factory)

3. Differences between H100 and H200

To better understand the differences between NVIDIA H100 and H200, it is important to examine key aspects such as architecture, memory, compatibility, and performance. These factors directly impact how AI workloads are processed and how suitable each GPU is for different use cases. The following sections provide a detailed breakdown of each criterion.

3.1. Architecture and tensor cores

At the core, both H100 and H200 are built on the Hopper architecture and are equipped with Tensor Cores; these are key components that accelerate AI workloads such as training, fine-tuning, and inference.

This means both GPUs are highly powerful for modern AI tasks. However, the H200 is further optimized to handle larger models and more complex workloads, making it more suitable for enterprise-scale environments.

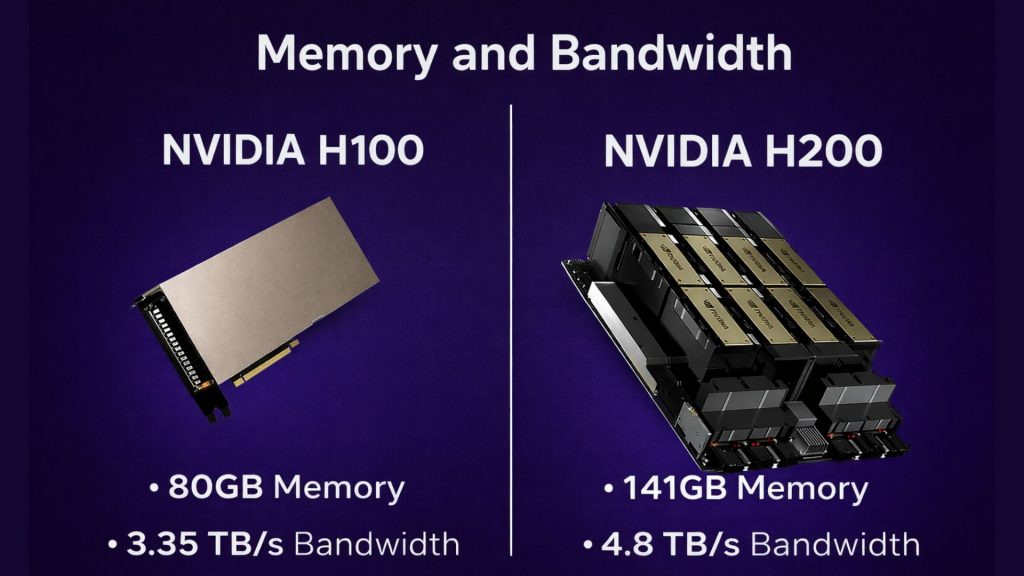

3.2. Memory and bandwidth

This is one of the most significant differences between NVIDIA H100 and NVIDIA H200. The H200 delivers major improvements in both memory capacity and memory bandwidth, enabling more efficient processing of large-scale AI models.

According to NVIDIA, H200 is the first GPU to use HBM3E, offering 141GB of memory and 4.8TB/s of bandwidth-nearly double the memory capacity of H100 and about 1.4 times higher bandwidth.

These advantages are especially important for enterprises running:

- Large Language Models (LLMs)

- Generative AI applications

- RAG (Retrieval-Augmented Generation) or multimodal AI

- Workloads that require loading and processing large datasets directly on the GPU

In simple terms, the larger and more complex the AI workload, the more advantage H200 has over H100.

H200 offers a major memory and bandwidth upgrade over H100 for large-scale AI workloads

3.3. Software compatibility

A major advantage is that both H100 and H200 belong to the same NVIDIA ecosystem, ensuring strong compatibility with most modern AI frameworks and development environments.

Enterprises can easily deploy on familiar tools such as:

- PyTorch

- TensorFlow

- vLLM

- Ollama

- Containerized environments and AI serving platforms

This makes it much easier to upgrade from H100 to H200 or run both GPUs in parallel without significant changes to the underlying software infrastructure.

3.4. Energy Efficiency

When comparing GPUs for enterprise use, energy efficiency is not only about power consumption but also about how effectively each workload is processed.

While both H100 and H200 are designed for high-performance AI and HPC environments, the H200 can deliver better efficiency for heavy workloads thanks to faster processing and reduced memory bottlenecks. NVIDIA also highlights that the H200 is engineered to provide better energy efficiency and a lower total cost of ownership for large-scale AI and HPC workloads.

For enterprises, this is particularly important when optimizing:

- Model training time

- Inference speed

- AI infrastructure operational efficiency

- Cost per workload processing

3.5. Performance

Overall, NVIDIA H100 remains a very powerful GPU and is fully capable of meeting most enterprise AI requirements. However, NVIDIA H200 demonstrates a clear advantage in workloads that require large memory, high bandwidth, and heavy data processing.

According to NVIDIA, the H200 can deliver higher inference performance for large models such as Llama 2 70B and GPT-3 175B, thanks to its larger memory capacity and improved data handling capabilities.

In simple terms:

- Choose H100 if you need a powerful, stable, and cost-balanced GPU

- Choose H200 if you work with large LLMs, generative AI, or high-scale enterprise workloads

Instead of investing directly in costly GPU infrastructure, enterprises can now access both NVIDIA H100 and NVIDIA H200 through GPU Container, this is a pre-built GPU server service designed to support AI needs from experimentation to real-world deployment.

This model offers the advantage of selecting the right GPU based on workload while significantly reducing AI infrastructure deployment time. According to FPT AI Factory, GPU Container enables fast setup, flexible pricing, support for multiple AI environments, and easy scalability as demands grow.

Key advantages of GPU Container include:

- Availability of both H100 and H200 options

- Fast deployment without upfront infrastructure investment

- Flexible resource scaling based on demand

- Suitable for training, fine-tuning, and inference

- Helps shorten experimentation time and accelerate AI adoption into production

GPU Container enables fast setup, flexible pricing, support for multiple AI environments, and easy scalability as demands grow (Source: FPT AI Factory)

4. Key specs comparison between H100 and H200

To better understand the differences between NVIDIA H100 and NVIDIA H200, enterprises should look at core specifications such as architecture, Tensor Cores, memory capacity, memory bandwidth, and Multi-Instance GPU (MIG) capabilities. These factors directly impact the efficiency of AI training, AI inference, LLMs, and HPC workloads.

| Criteria | H100 | H200 |

| Architecture | Hopper | Hopper (optimized) |

| Tensor Cores | Next-generation Tensor Cores, optimized for AI and HPC | Next-generation Tensor Cores, further improved for higher AI performance |

| Memory Capacity | Large, suitable for various AI workloads | Significantly larger, optimized for LLMs |

| Memory Bandwidth | High | Higher, reducing bottlenecks and accelerating processing |

| Multi-instance GPU (MIG) | Supports flexible GPU partitioning | Supports MIG with better optimization for multiple concurrent workloads |

5. H100 vs H200: Which one should you choose?

The choice between NVIDIA H100 Tensor Core GPU and NVIDIA H200 Tensor Core GPU depends on your workload scale, budget, and performance requirements. Each GPU is suited for different needs, ranging from basic AI deployment to large-scale LLM systems.

5.1. Choose H100 if you need balanced AI performance

- Suitable for small and medium-sized enterprises

- Optimizes deployment and operational costs

- Performs well in common AI tasks such as chatbots, natural language processing, and data analytics

- Offers a balance between performance, cost, and availability

H100 is a suitable choice when you need a powerful GPU while still optimizing your budget and ensuring easy deployment across various use cases.

5.2. Choose H200 if you work with large-scale LLMs

- Suitable for large enterprises and large-scale AI systems

- Designed for large language models (LLMs) or workloads requiring high memory and bandwidth

- Optimized for training and inference of complex models

- Ensures high performance and strong scalability

H200 is the optimal choice when handling complex AI workloads that require superior performance and stable operation at scale.

Both NVIDIA H100 Tensor Core GPU and NVIDIA H200 Tensor Core GPU are powerful solutions designed to meet modern AI demands. H100 provides balanced performance and cost efficiency, making it suitable for a wide range of use cases, while H200 excels with higher memory and bandwidth, making it ideal for large LLMs and heavy workloads.

You can begin quickly with the Starter Plan from FPT AI Factory, which grants 100 dollars in free credits. These credits are available immediately after you sign up, so you can log in and start using the platform without any delay. The plan gives you enough capacity to explore Prompt Engineering and Fine tuning with models such as Llama 3.3, allowing you to develop, validate, and refine AI solutions without initial cost.

If your business or organization is looking for tailored solutions or planning deployment at a larger scale, please reach out to FPT AI Factory via the contact form. Our team will work with you to provide consultation and support aligned with your specific requirements.

Contact Information:

- Hotline: 1900 638 399

- Email: support@fptcloud.com