What is Physical AI?

Physical AI marks the shift of artificial intelligence from digital environments into the real world, enabling machines to perceive, reason, and act through robots, autonomous systems, and industrial devices. These applications require massive computational power for simulation, synthetic data generation, and large-scale model training. In this context, infrastructure platforms like FPT AI Factory play a critical role by providing high-performance GPU clusters to accelerate the development and deployment of Physical AI systems.

1. What is Physical AI?

Physical AI refers to artificial intelligence systems that can perceive, understand, reason, and act in the real physical world, not just process data on a screen.

While traditional AI focuses on text, images, and digital information, Physical AI goes a step further by connecting AI models with sensors, cameras, robots, and machines. This allows systems to observe their surroundings and take meaningful actions in response. In this way, Physical AI acts as a bridge between software intelligence and the physical world of movement, objects, and real-world interactions.

Before the emergence of Physical AI, machines operated based on fixed instructions. For example, a factory robot could weld the same point repeatedly, and early robot vacuums followed preset paths without deviation. These systems were efficient but lacked the ability to adapt.

With Physical AI, however, machines become far more flexible and responsive. They can detect obstacles and reroute automatically, handle unfamiliar objects without prior programming, and react to changes in real time. Instead of simply following predefined scripts, they can understand their environment and adjust their behavior accordingly.

Physical AI refers to artificial intelligence systems that can perceive, understand, and interact with the real world

2. Why does Physical AI matter?

Current AI trends are rapidly shifting toward AI Reasoning, Agentic AI, and Physical AI, driving more complex and compute-intensive inference workloads. These advanced use cases require specialized infrastructure, powered by high-performance GPU systems to ensure scalability, speed, and reliability. Physical AI allows machines to perceive their surroundings, reason about what’s happening, and adapt their actions in real time.

To meet this growing demand, FPT is now opening pre-orders for NVIDIA HGX B300 GPU Cloud, enabling businesses to access next-generation GPU infrastructure optimized for large-scale AI inference and deployment.

Traditional automation depends on predefined rules, making it unreliable when facing variability such as human interaction, changing objects, or shifting environments. In contrast, Physical AI enables continuous perception and instant decision-making, helping machines operate more flexibly without rigid scripts.

By handling uncertainty, Physical AI expands automation into complex, unstructured environments like warehouses, hospitals, and urban systems. It also supports tasks that require precision and context, such as manipulating irregular objects or navigating shared spaces where rule-based systems fall short.

Overall, Physical AI improves efficiency by reducing errors, minimizing human intervention, and enhancing safety. More importantly, it enables true autonomy, where machines can intelligently interact with and respond to the physical world.

Physical AI matters because it allows machines to adapt and act intelligently in real-world environments

3. Physical AI Technology Stack

Physical AI does not run on a single technology. It is a coordinated stack of specialized systems, each handling a distinct layer, from sensing the world to acting in it.

3.1. Sensors and Perception Systems

Sensors are the eyes and ears of any Physical AI system. Cameras, lidar, radar, depth sensors, and IMUs continuously feed raw data about the surrounding environment: object positions, distances, motion, and spatial layout.

For example, a warehouse robot uses lidar to detect a human walking into its path and triggers a stop within milliseconds. Without accurate, real-time perception, no downstream reasoning or action is possible.
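As a rough illustration of the warehouse example, the sketch below checks a lidar scan for any return inside a forward safety cone. The function name `should_stop`, the scan format (angle in degrees, range in meters), and the thresholds are all assumptions made for illustration; they do not come from any specific robot's API.

```python
import math
from typing import Iterable, Tuple

def should_stop(scan: Iterable[Tuple[float, float]],
                stop_distance_m: float = 1.0,
                half_fov_deg: float = 30.0) -> bool:
    """Return True if any lidar return lies inside the forward safety cone.

    scan: (angle_deg, range_m) pairs, 0 degrees = straight ahead (assumed format).
    """
    for angle_deg, range_m in scan:
        if math.isnan(range_m) or range_m <= 0.0:
            continue  # skip invalid returns
        if abs(angle_deg) <= half_fov_deg and range_m < stop_distance_m:
            return True
    return False

# Hypothetical scans: one clear path, one with an object 0.6 m ahead.
clear_scan = [(-20.0, 3.2), (0.0, 2.8), (20.0, 3.0)]
blocked_scan = [(-20.0, 3.2), (5.0, 0.6), (20.0, 3.0)]
print(should_stop(clear_scan))    # False
print(should_stop(blocked_scan))  # True
```

A real system would fuse several sensors and track objects over time; this only shows the basic distance check.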

3.2. Simulation and Synthetic Data

Training Physical AI in the real world is slow, expensive, and dangerous. Simulation environments replicate real-world physics digitally, allowing machines to train across millions of scenarios (different lighting conditions, object configurations, and human behaviors) at a fraction of the cost.

For instance, a self-driving car can experience thousands of near-miss pedestrian scenarios in simulation before encountering a single one on a real road. Synthetic data generated from these simulations fills the gaps that real-world datasets cannot cover safely or at scale.
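One common way to generate varied scenarios is to randomize simulation parameters for each run. The sketch below is a minimal illustration of that idea. The `Scenario` fields and their value ranges are hypothetical, and a real pipeline would pass these parameters to a physics simulator rather than just listing them.

```python
import random
from dataclasses import dataclass

@dataclass
class Scenario:
    light_lux: float        # ambient light level
    friction: float         # ground friction coefficient
    pedestrian_count: int   # number of simulated pedestrians

def sample_scenario(rng: random.Random) -> Scenario:
    """Draw one randomized scenario; all ranges are illustrative assumptions."""
    return Scenario(
        light_lux=rng.uniform(50.0, 10_000.0),
        friction=rng.uniform(0.3, 1.0),
        pedestrian_count=rng.randint(0, 5),
    )

rng = random.Random(0)  # fixed seed so runs are reproducible
scenarios = [sample_scenario(rng) for _ in range(1000)]
print(len(scenarios), scenarios[0])
```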

3.3. AI Models and Decision-Making

This is the reasoning layer. Foundation models, including vision-language models (VLMs) and vision-language-action models (VLAs), process multimodal inputs from sensors and determine what action to take next.

For example, a humanoid robot uses a VLA model to interpret a spoken instruction like “pick up the blue box” and translate it into a sequence of physical actions: locating the object, planning the reach, and executing the grip. These models combine general world knowledge with task-specific training, enabling machines to handle both familiar and unfamiliar situations.

The reasoning layer processes sensor data to decide actions and guide intelligent behavior. (Source: MathWorks)

3.4. Robotics and Control Systems

Once a decision is made, it must be translated into precise physical movement. Control systems convert model outputs into motor commands, managing joint angles, grip force, speed, and balance in real time.

For instance, a surgical robot adjusts its tool pressure mid-procedure based on live tissue resistance feedback, far beyond what any pre-programmed script could handle. This layer bridges the gap between digital intelligence and physical action.
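To make the idea of feedback control concrete, here is a minimal sketch of a proportional-integral (PI) controller driving a toy gripper toward a target force. The gains, the time step, and the first-order plant model are assumptions chosen for illustration; real control stacks are far more elaborate and are tuned to the hardware.

```python
class PIController:
    """Proportional-integral controller (illustrative gains, not tuned for hardware)."""

    def __init__(self, kp: float, ki: float, dt: float):
        self.kp, self.ki, self.dt = kp, ki, dt
        self.integral = 0.0

    def update(self, target: float, measured: float) -> float:
        error = target - measured
        self.integral += error * self.dt          # accumulate error over time
        return self.kp * error + self.ki * self.integral

TARGET_FORCE_N = 2.0   # assumed target grip force
DT = 0.01              # control period in seconds (assumed)
TAU = 0.1              # time constant of the toy gripper model (assumed)

controller = PIController(kp=2.0, ki=5.0, dt=DT)
force = 0.0
for _ in range(1000):  # 10 simulated seconds
    command = controller.update(TARGET_FORCE_N, force)
    force += (command - force) * DT / TAU   # toy first-order gripper response
print(round(force, 3))
```

The integral term is what removes the steady-state error here; with proportional control alone, this toy plant would settle below the target.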

3.5. Edge Computing and Deployment Systems

Physical AI systems cannot rely on a distant cloud server for every decision; the added latency would make real-time response impossible. Edge computing brings processing power directly onto the robot or vehicle, enabling millisecond inference locally.

For example, an autonomous forklift processes obstacle detection and path adjustments entirely onboard, without waiting for a cloud response. Deployment systems then manage the full fleet: pushing model updates over the air, monitoring performance, and maintaining reliable operation even in low-connectivity environments.
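A quick back-of-the-envelope calculation shows why latency matters: a moving machine keeps traveling while it waits for a decision. The speed and latency figures below are hypothetical values picked for illustration, not measurements.

```python
def distance_during_latency(speed_m_s: float, latency_ms: float) -> float:
    """Distance traveled (meters) while waiting latency_ms for a decision."""
    return speed_m_s * latency_ms / 1000.0

SPEED_M_S = 2.0           # assumed forklift speed
CLOUD_LATENCY_MS = 150.0  # assumed cloud round trip
EDGE_LATENCY_MS = 10.0    # assumed onboard inference time

cloud_m = distance_during_latency(SPEED_M_S, CLOUD_LATENCY_MS)
edge_m = distance_during_latency(SPEED_M_S, EDGE_LATENCY_MS)
print(f"cloud: {cloud_m:.2f} m, edge: {edge_m:.2f} m")  # cloud: 0.30 m, edge: 0.02 m
```

Under these assumptions, the forklift covers 30 cm before a cloud response arrives, versus 2 cm with onboard inference.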

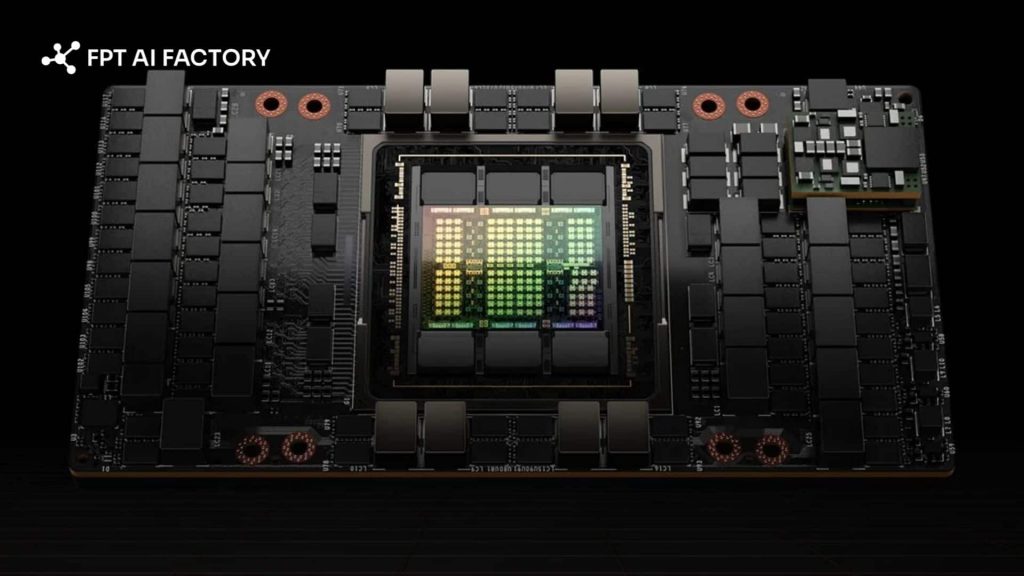

3.6. GPU Infrastructure for Training and Simulation

Training Physical AI models and running high-fidelity simulations require enormous computational power. GPU clusters handle the parallel processing demands of reinforcement learning, large model training, and physics-based simulation at scale.

For example, training a single robot manipulation policy may require running tens of millions of simulated trials, a workload that would take months on standard hardware but completes in days on a GPU cluster. This infrastructure is what makes Physical AI development practically feasible.
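The months-versus-days comparison comes down to simple throughput arithmetic. The sketch below uses made-up numbers (30 million trials, 5 trials per second on a single machine, 200 trials per second on a cluster) purely to show the calculation; actual throughput depends heavily on the simulator, the model, and the hardware.

```python
SECONDS_PER_DAY = 86_400

def days_to_finish(total_trials: int, trials_per_second: float) -> float:
    """Wall-clock days needed to run total_trials at a given throughput."""
    return total_trials / trials_per_second / SECONDS_PER_DAY

TOTAL_TRIALS = 30_000_000                                # assumed workload
workstation_days = days_to_finish(TOTAL_TRIALS, 5.0)     # assumed single-machine rate
cluster_days = days_to_finish(TOTAL_TRIALS, 200.0)       # assumed cluster rate
print(f"{workstation_days:.1f} days vs {cluster_days:.1f} days")  # 69.4 days vs 1.7 days
```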

GPU infrastructure enables large-scale training and simulation for Physical AI (Source: FPT AI Factory)

4. How Physical AI works

Traditional AI models can process text and images effectively, but they lack an understanding of the physical world. They cannot accurately judge distance, predict movement, or interact with objects in three-dimensional space. Physical AI is designed to close this gap.

Physical AI extends generative AI with spatial understanding and physical reasoning, enabling machines to not only recognize objects but also understand their position, movement, and interactions in the real world.

To operate, Physical AI systems rely on multimodal inputs, including:

- Visual data from cameras and sensors

- Motion and spatial data from LiDAR or IMUs

- Language inputs for instructions and context

- Real-time operational data such as speed or force

These inputs are processed in a continuous loop: observe → interpret → decide → act → learn, allowing systems to adapt dynamically.
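The loop can be sketched in a few lines of code. The `ToyRobot` class below is a hypothetical one-dimensional example: it observes its position, interprets the distance to a goal, decides on a clamped step, acts, and logs the experience. Each method is a stand-in for what would be a full subsystem in practice.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

@dataclass
class ToyRobot:
    position: float = 0.0
    goal: float = 5.0
    max_step: float = 1.0
    experience: List[Tuple[float, float]] = field(default_factory=list)

    def observe(self) -> float:
        return self.position                    # stand-in for sensor readings

    def interpret(self, reading: float) -> float:
        return self.goal - reading              # signed distance to the goal

    def decide(self, error: float) -> float:
        return max(-self.max_step, min(self.max_step, error))  # clamp the step

    def act(self, step: float) -> None:
        self.position += step                   # stand-in for motor commands

    def learn(self, error: float, step: float) -> None:
        self.experience.append((error, step))   # logged for later training

robot = ToyRobot()
while abs(robot.goal - robot.position) > 1e-6:
    reading = robot.observe()
    error = robot.interpret(reading)
    step = robot.decide(error)
    robot.act(step)
    robot.learn(error, step)
print(robot.position, len(robot.experience))    # 5.0 5
```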

Training is typically done in physics-based simulations, where machines can safely run millions of scenarios. Using reinforcement learning, systems improve through trial and error, then are deployed in real environments and continuously refined with real-world data.
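Trial-and-error learning can be illustrated with a very small example. The sketch below uses an epsilon-greedy bandit, one of the simplest reinforcement learning setups, to learn which of three hypothetical grasp strategies succeeds most often. The success probabilities are invented for the demo, and production systems use far richer algorithms and simulators.

```python
import random
from typing import List, Tuple

def run_bandit(success_prob: List[float], trials: int = 5000,
               epsilon: float = 0.1, seed: int = 0) -> Tuple[List[float], List[int]]:
    """Epsilon-greedy learning over a fixed set of actions."""
    rng = random.Random(seed)
    counts = [0] * len(success_prob)
    values = [0.0] * len(success_prob)   # running mean reward per action
    for _ in range(trials):
        if rng.random() < epsilon:
            action = rng.randrange(len(success_prob))                 # explore
        else:
            action = max(range(len(values)), key=values.__getitem__)  # exploit
        reward = 1.0 if rng.random() < success_prob[action] else 0.0  # simulated trial
        counts[action] += 1
        values[action] += (reward - values[action]) / counts[action]  # update the mean
    return values, counts

# Hypothetical success rates for three grasp strategies.
values, counts = run_bandit([0.2, 0.5, 0.8])
best = values.index(max(values))
print(best, counts)
```

The agent is never told the success rates; it estimates them from simulated outcomes and gradually favors the strategy with the highest estimate.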

Because simulation and model training require massive computational power, GPU infrastructure plays a critical role in scaling and accelerating Physical AI development.

For organizations building Physical AI applications in Japan and Southeast Asia, the GPU Cluster of FPT AI Factory provides the robust compute infrastructure needed for these workloads (simulation at scale, synthetic data generation, and large model training) without the capital cost of building dedicated hardware. Teams can run the intensive training cycles that Physical AI requires, then deploy optimized models to edge devices for real-time operation in the field.

GPU cluster of FPT AI Factory (Source: FPT AI Factory)

5. Common Physical AI use cases

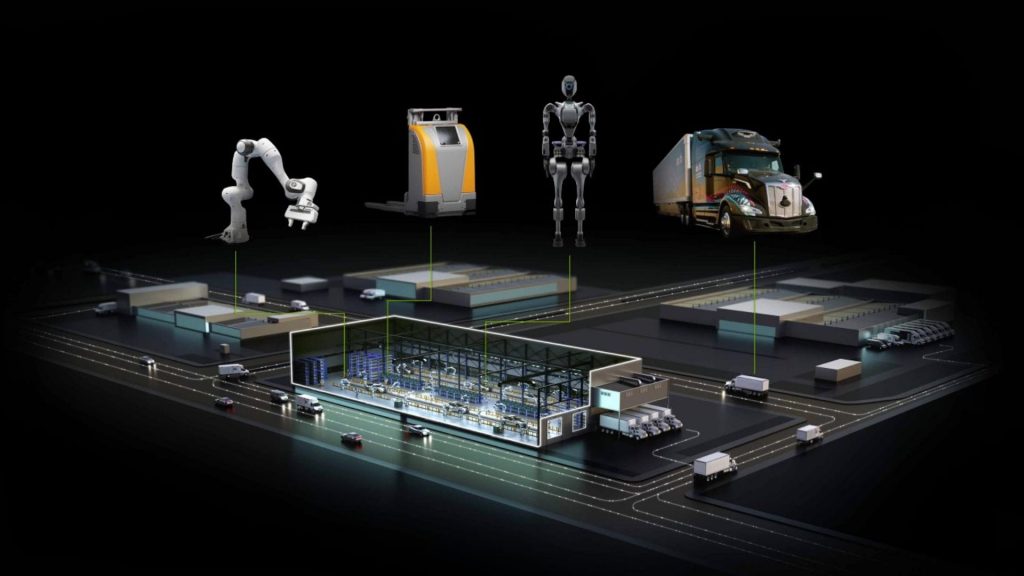

5.1. Robotics and Warehouse Automation

Physical AI transforms robots from rigid, pre-programmed machines into adaptive systems that respond to real conditions. In warehouses and factories, this shift is already operational:

- Robot arms read the position and orientation of objects on a conveyor belt and adjust their grip accordingly; no reprogramming is needed when the object changes

- Autonomous mobile robots (AMRs) navigate dynamically around humans and other machines using live sensor feedback, making them deployable in environments that traditional fixed-path robots cannot handle

- Surgical robots are trained in simulation to perform intricate tasks, such as threading a needle or adjusting suture tension, before operating in real conditions, bringing specialist-level precision to more procedures and locations

5.2. Autonomous Vehicles and Machines

Physical AI is the core technology behind any vehicle or machine that must navigate the real world independently:

- Autonomous vehicles process camera, lidar, and radar data continuously to understand their surroundings and make split-second decisions

- Vision-language-action (VLA) models combine perception and reasoning to handle the full range of real driving conditions: pedestrians stepping off curbs, construction zones, rain, sudden stops

- Beyond road vehicles, the same principles apply to autonomous forklifts, agricultural machines, and mining equipment operating in unpredictable outdoor environments

Physical AI powers machines and vehicles to navigate and operate autonomously in the real world. (Source: Freepik)

5.3. Industrial Inspection and Real-World AI Agents

Physical AI extends beyond moving robots into fixed intelligent systems that monitor and manage complex environments:

- Fixed cameras combined with computer vision models track people, vehicles, and robots simultaneously across large indoor and outdoor facilities

- Teams can monitor safety, optimize routes dynamically, and detect anomalies in real time without human operators watching every feed

- In industrial settings, AI agents inspect equipment for defects, measure wear on components, and flag maintenance needs automatically, reducing downtime and replacing manual inspection in hazardous areas
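As a simplified illustration of automated anomaly flagging, the sketch below compares a new sensor reading against a baseline using a z-score. The vibration values and the threshold of 3 standard deviations are assumptions for the example; real inspection systems typically rely on learned vision or time-series models rather than a single statistic.

```python
import statistics
from typing import Sequence

def is_anomalous(baseline: Sequence[float], reading: float,
                 z_threshold: float = 3.0) -> bool:
    """Flag a reading that lies more than z_threshold standard deviations from the baseline mean."""
    mean = statistics.fmean(baseline)
    stdev = statistics.stdev(baseline)
    if stdev == 0.0:
        return reading != mean   # any deviation from a constant baseline is flagged
    return abs(reading - mean) / stdev > z_threshold

# Hypothetical vibration amplitudes (mm/s) from a healthy motor.
baseline = [0.50, 0.52, 0.49, 0.51, 0.50, 0.48, 0.51]
print(is_anomalous(baseline, 0.51))  # False
print(is_anomalous(baseline, 0.90))  # True
```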

6. Key challenges in Physical AI

Building Physical AI systems is significantly harder than building software AI. The real world does not offer clean data, controlled conditions, or the ability to undo a mistake.

6.1. Safety, Reliability, and Edge-Case Handling

In most AI applications, a wrong prediction produces a bad output. In Physical AI, it can damage equipment, injure people, or cause accidents. The stakes of failure are physical and immediate.

- Edge cases are unavoidable: no simulation covers every real-world scenario, and systems must handle situations they have never encountered during training

- Speed and accuracy must be balanced simultaneously: in autonomous vehicles and industrial robots, a slow but correct decision can be just as dangerous as a fast wrong one

- Maintaining consistent performance across varying conditions (different lighting, temperature, wear on hardware, new object types) requires continuous monitoring and retraining

- Regulatory and liability frameworks for autonomous physical systems are still evolving, adding uncertainty to deployment decisions

6.2. Training in Complex Physical Environments

Unlike language models that train on static text datasets, Physical AI requires machines to interact with dynamic, unpredictable environments, and that interaction is expensive to generate at scale.

- Real-world data collection is slow: every data point requires a physical machine to move, interact, and respond in continuous time

- Physics is hard to replicate perfectly: gravity, friction, surface texture, lighting, and sensor noise all vary in ways that simulation does not always capture accurately

- The sim-to-real gap remains a core problem: models that perform well in simulation frequently fail when deployed to real hardware, requiring additional fine-tuning that can be costly and time-consuming

- Rare but critical scenarios, such as a robot dropping a fragile object or a vehicle encountering black ice, are difficult to generate in sufficient volume for robust training

Training Physical AI is challenging because it requires costly real-world data and relies on imperfect simulations (Source: Freepik)

6.3. Deployment Across Cloud, Edge, and Real-World Systems

Training a Physical AI model is only half the challenge. Getting it to run reliably on real hardware, at real-world speed, across diverse environments is where many projects stall.

- Edge hardware has limited compute: models trained on powerful GPU clusters must be compressed and optimized to run on onboard processors without sacrificing critical response time

- Latency is non-negotiable: a self-driving car or surgical robot cannot wait for a cloud server to respond; inference must happen locally in milliseconds

- Fleet management at scale introduces complexity: pushing model updates, monitoring performance, and maintaining security across hundreds or thousands of deployed devices requires robust infrastructure

- Hybrid cloud-to-edge architectures must be designed carefully so that training workloads, simulation, and real-time inference each run in the right environment without creating bottlenecks
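One widely used way to shrink a model for edge hardware is quantization, which stores weights as low-precision integers. The sketch below shows symmetric int8 quantization in plain Python on a handful of made-up weights. It illustrates the general technique only and does not describe how any particular platform or toolkit implements it.

```python
from typing import List, Tuple

def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric int8 quantization: map floats to integers in [-127, 127]."""
    scale = max(abs(w) for w in weights) / 127.0
    if scale == 0.0:
        scale = 1.0  # all-zero weights: any scale works
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the int8 values."""
    return [q * scale for q in quantized]

weights = [0.82, -1.27, 0.05, 0.40]   # hypothetical layer weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, max_error <= scale / 2 + 1e-9)
```

Each weight now fits in one byte instead of four, at the cost of a rounding error of at most half the scale per weight.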

7. Physical AI vs. Generative AI

Physical AI and Generative AI share the same foundation: both are built on large neural networks trained on massive datasets, and both use that training to interpret inputs and produce intelligent outputs. In many Physical AI systems, generative AI models serve as the reasoning core for processing language instructions, interpreting visual scenes, or planning action sequences. The two are not competing approaches; Physical AI is built on top of generative AI and extends it into a new domain.

The difference lies in what they are designed to do with that intelligence.

| Aspect | Generative AI | Physical AI |
| --- | --- | --- |
| Main goal | Generate content: text, images, code, audio | Perceive, reason, and act in the physical world |
| Output type | Digital content: words, images, video, code | Physical actions: movement, manipulation, navigation |
| Interaction with the physical world | None; operates entirely in digital environments | Direct: sensors in, actuators out |
| Typical training data | Text, images, audio scraped from the internet | Sensor data, simulation runs, real-world robot interactions |
| Deployment environment | Cloud servers, browsers, applications | Edge devices, robots, vehicles, embedded hardware |
| Common use cases | Chatbots, content creation, coding assistants, search | Autonomous vehicles, industrial robots, smart facilities |

As Physical AI continues to evolve, organizations are moving beyond digital-only AI systems toward machines that can perceive, reason, and interact with the real world. Successfully building these systems requires not only advanced AI models, but also scalable infrastructure capable of supporting simulation, training, deployment, and real-time inference at scale.

To summarize:

- Physical AI enables machines to perceive, reason, and act in real-world environments instead of operating only in digital spaces.

- Unlike traditional automation, Physical AI systems can adapt dynamically to changing environments and unexpected situations.

- Physical AI relies on a complete technology stack, including sensors, simulation systems, AI models, robotics, edge computing, and GPU infrastructure.

- Training Physical AI models requires large-scale simulation, synthetic data generation, and high-performance GPU clusters.

- Common use cases include robotics, warehouse automation, autonomous vehicles, industrial inspection, and intelligent real-world monitoring systems.

- Key challenges include safety, edge-case handling, sim-to-real transfer, latency requirements, and deployment complexity across cloud and edge environments.

- Physical AI extends generative AI into the physical world by combining reasoning capabilities with real-world perception and action.

- Platforms like FPT AI Factory help organizations accelerate Physical AI development through scalable GPU infrastructure, AI development tools, and deployment-ready environments.

Platforms like FPT AI Factory go beyond GPU compute, offering a full AI ecosystem, from GPU clusters and development environments to deployment tools and managed services, enabling end-to-end AI development while reducing complexity and accelerating time-to-market.

To get started, FPT AI Factory provides $100 in free credits for new users, giving you access to GPU container, GPU virtual machine, AI notebooks, Serverless Inference, and AI Studio, and making it easier to experiment with Physical AI solutions without upfront infrastructure investment. Individuals receive the credits upon registration and can use them immediately after logging in, with no setup or approval process required, so you can start building and experimenting right away.

For businesses with more advanced needs, such as customized solutions or large-scale deployments, we recommend contacting the FPT AI Factory team directly. Our team will provide tailored consultation and support to match your specific requirements.

Contact information:

Hotline: 1900 638 399

Email: support@fptcloud.com
