What is Nvidia Blackwell, and why is it being hailed as the engine of the new industrial revolution? This groundbreaking architecture redefines the limits of generative AI, offering unprecedented efficiency and computing density for complex workloads. By integrating these cutting-edge chips into our sovereign cloud, FPT AI Factory enables organizations to stay at the forefront of innovation with high-performance infrastructure tailored for the future of AI.

1. What is Nvidia Blackwell?

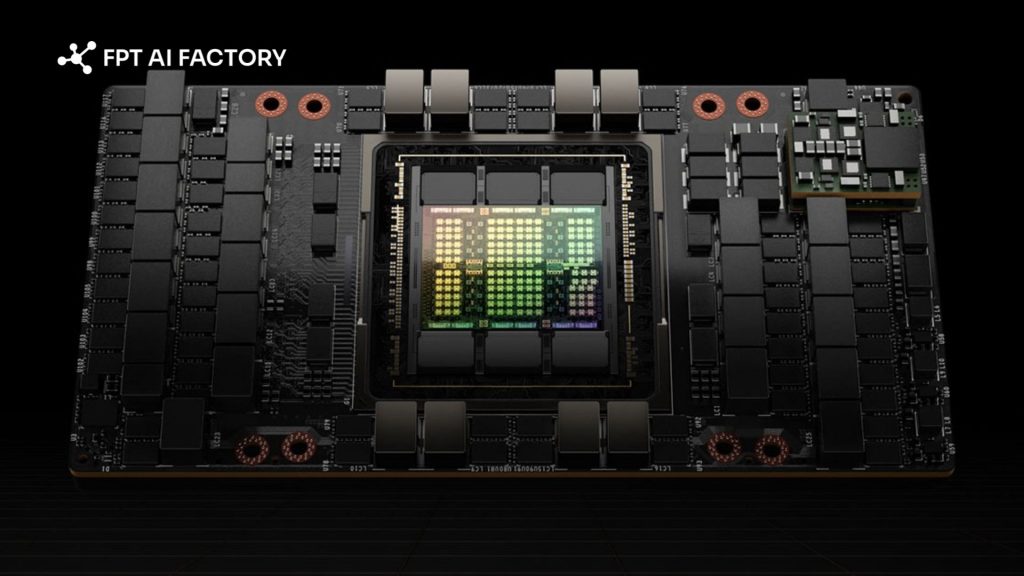

NVIDIA Blackwell refers to a new generation of GPU architecture and data center platform designed primarily for AI and cloud computing workloads. In this context, Blackwell focuses on enterprise-grade systems such as B100, B200, and GB200, not consumer GPUs for gaming like RTX 5090 or 5080.

At its core, Blackwell is built to power modern AI use cases, including large-scale model training, real-time inference, and data center operations. It succeeds the previous Hopper architecture and introduces significant improvements in compute performance, memory bandwidth, and scalability for AI-driven applications.

Unlike gaming GPUs, which prioritize graphics rendering, Blackwell GPUs are optimized for handling massive datasets and complex neural networks. They are widely adopted by cloud providers and enterprises to run large language models, generative AI systems, and high-performance computing workloads.

NVIDIA Blackwell refers to a new generation of GPU architecture (Source: FPT AI Factory)

2. Key benefits and limitations of NVIDIA Blackwell

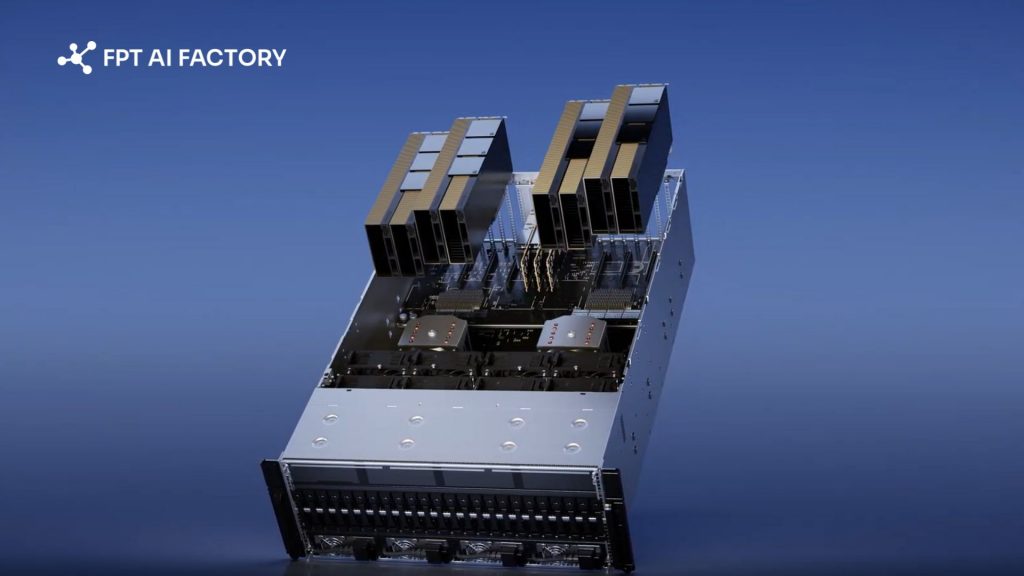

NVIDIA Blackwell is designed to push the boundaries of AI computing, especially for large-scale training and inference. However, like any advanced platform, it comes with both clear advantages and practical trade-offs that organizations need to consider.

2.1. Benefits of NVIDIA Blackwell

Blackwell introduces several improvements that directly address the growing demands of modern AI workloads, particularly large language models and generative AI systems.

- Significantly higher AI performance: Blackwell GPUs deliver much higher compute throughput compared to previous generations, enabling faster training and inference for large-scale models.

- Optimised for large-scale AI workloads: The architecture is purpose-built for handling massive datasets and complex neural networks, making it ideal for LLMs, generative AI, and enterprise AI applications.

- Improved scalability with advanced interconnects: With technologies like fifth-generation NVLink, Blackwell enables efficient communication between GPUs, allowing clusters to scale to hundreds of GPUs with minimal bottlenecks.

- High-bandwidth memory for large models: Support for HBM3e memory with multi-terabyte-per-second bandwidth allows GPUs to handle larger models, bigger batch sizes, and longer context windows without relying on slower system memory.

- Better energy efficiency for AI workloads: Despite higher power consumption, Blackwell improves performance-per-watt significantly, especially for inference tasks, reducing overall cost per AI output.

- Flexible resource utilisation (virtualisation support): Features like Multi-Instance GPU (MIG) allow organisations to partition GPU resources efficiently, improving utilisation across multiple workloads.

2.2. Considerations before choosing NVIDIA Blackwell

While Blackwell offers strong performance gains, it also introduces several challenges, particularly in infrastructure, cost, and deployment complexity.

- High power consumption and cooling requirements: Blackwell GPUs can require up to ~1,000W per unit and often need liquid cooling systems, making them demanding in terms of data centre infrastructure.

- Not compatible with standard GPU servers: These GPUs use specialised form factors and require purpose-built AI systems like HGX or DGX platforms, limiting upgrade flexibility.

- High upfront investment: The cost of hardware, infrastructure upgrades, and deployment can be significant, especially for organizations building large-scale GPU clusters.

- Complex deployment and operations: Managing large GPU clusters with advanced interconnects and distributed workloads requires specialized expertise in AI infrastructure and system optimization.

- Diminishing returns without optimized software: To fully utilize Blackwell’s capabilities, software stacks must be optimized. Without proper tuning, some compute resources may remain underutilized.

- Less optimized for traditional HPC (non-AI workloads): The architecture prioritizes AI-specific precision formats (e.g., FP4, FP8), which may not deliver the same benefits for workloads requiring high FP64 performance.

NVIDIA Blackwell is designed to push the boundaries of AI computing (Source: FPT AI Factory)

3. Use Cases for Blackwell GPUs

NVIDIA Blackwell is designed to support a wide range of AI and data-intensive workloads, from model training to real-world deployment. Below are the most common applications where Blackwell delivers strong value.

3.1. LLM Training and Fine-Tuning

NVIDIA Blackwell is highly optimized for training and fine-tuning large language models (LLMs), especially at extreme scale. Its architecture is designed to handle trillion-parameter models and complex AI pipelines that require massive compute and memory bandwidth.

With improvements in throughput and multi-GPU communication, Blackwell enables faster model training cycles and more efficient fine-tuning. This is particularly important for enterprises building domain-specific AI models or continuously updating models with new data.

Ultimately, this provides engineering teams with the ability to:

- Complete the training of complex AI architectures significantly faster.

- Optimize resource management across multi-stage AI operations.

- Scale up to thousands of interconnected GPUs while maintaining peak system stability.

3.2. Data centres and cloud computing

Blackwell is built as a data center–first platform, powering AI infrastructure at scale across cloud and enterprise environments.

One common deployment model is through GPU Virtual Machines, where users can access Blackwell GPUs on demand without managing physical infrastructure. This allows teams to quickly spin up environments for training, inference, or experimentation.

In Asia, FPT AI Factory is among the early providers offering GPU Virtual Machine services powered by Blackwell (including B300), with flexible pricing and scalable packages for both individuals and enterprises. Practically, this model allows enterprises to:

- Lower financial barriers by bypassing costly on-premise equipment investments.

- Scale infrastructure up or down seamlessly as workload requirements change.

- Significantly shorten the deployment lifecycle for AI-driven solutions.

3.3. Big data and high-performance computing workloads

Beyond AI, Blackwell also supports big data processing and high-performance computing (HPC) workloads. Its architecture is designed to handle both AI-driven and traditional simulation-based tasks within the same environment. Therefore, organizations can leverage this setup for:

- Intensive research simulations in fields like energy and climatology.

- Managing and extracting insights from massive datasets efficiently.

- Blending machine learning models with traditional computing simulations to drive innovation.

By integrating AI acceleration with HPC capabilities, Blackwell enables faster insights and more complex computations across industries.

3.4. Physical AI and robotics

Blackwell also plays a key role in the development of physical AI – AI systems embedded in real-world machines like robots, autonomous systems, and smart factories. In robotics workflows, Blackwell-based systems are used across three stages:

- Training AI models at scale within high-performance data center environments

- Recreating real-world conditions through digital twin simulations

- Running AI models on robots to enable real-time actions and decision-making

This end-to-end capability allows developers to build more adaptive and intelligent robotic systems, especially in industries like manufacturing, logistics, and mobility.

3.5. Large-scale inference and LLM serving

Blackwell is particularly strong in large-scale inference, running AI models in production to serve real users. As models grow more complex, inference becomes one of the most resource-intensive parts of the AI lifecycle. With its optimized architecture, Blackwell can deliver higher throughput and lower cost per token when serving LLMs at scale.

For inference workloads and LLM serving, the standout benefits include:

- Handling high token throughput: This ensures fluid, real-time interactions for demanding applications like virtual assistants and intelligent AI chatbots.

- Minimizing system latency: Drastically reduced response times help deliver a noticeably faster and more engaging experience for the end user.

- Driving cost efficiency: It optimizes infrastructure expenses per request, making large-scale enterprise deployments much more financially sustainable.

This makes Blackwell a strong fit for enterprises deploying AI services to large user bases, where performance and cost per request are critical.

NVIDIA Blackwell is designed to support a wide range of AI and data-intensive workloads (Source: FPT AI Factory)

4. FAQs

4.1. What is the difference between NVIDIA Blackwell and Hopper?

Blackwell is a newer architecture optimized for large-scale AI (especially LLMs and generative AI), offering higher performance, larger memory, and faster interconnects than Hopper, which remains more mature and widely used for mixed AI and HPC workloads.

4.2. Which Blackwell GPU should I choose for my workload?

You should choose B200 or B300 for large-scale training and inference of massive models, while B100 is more suitable for enterprise workloads that need strong performance with better cost efficiency.

4.3. How much does 1 NVIDIA Blackwell cost?

A single Blackwell GPU typically costs around $30,000–$40,000, depending on the model, while cloud-based usage is priced hourly based on provider and configuration.

To get you up and running quickly, we provide a Starter Plan that includes $100 in free credits for new users to explore the FPT AI Factory ecosystem over 30 days. Once you register, the full $100 credit is instantly available and ready to use right after login, no setup steps or approval needed, so you can begin building and experimenting immediately. If you are an enterprise or organization with requirements for customization or large-scale deployment, please reach out to FPT AI Factory through the official contact form to receive dedicated consultation and tailored solutions.

In summary, understanding what is Nvidia Blackwell helps businesses better evaluate how next-generation GPU platforms can support their AI strategy, from large-scale model training to real-time inference in production. With its focus on performance, scalability, and AI-first architecture, Blackwell is becoming a key foundation for modern data centres and AI-driven applications. If you’re exploring how to adopt Blackwell GPUs or want to experience their capabilities through flexible GPU Virtual Machine solutions, now is a great time to get started. Contact FPT AI Factory to receive consultation immediately!

Contact information:

Hotline: 1900 638 399

Email: support@fptcloud.com

>> Read more:

A100 vs H100: Which GPU is better for AI workloads?

B200 vs B300: Which Blackwell GPU should you choose?