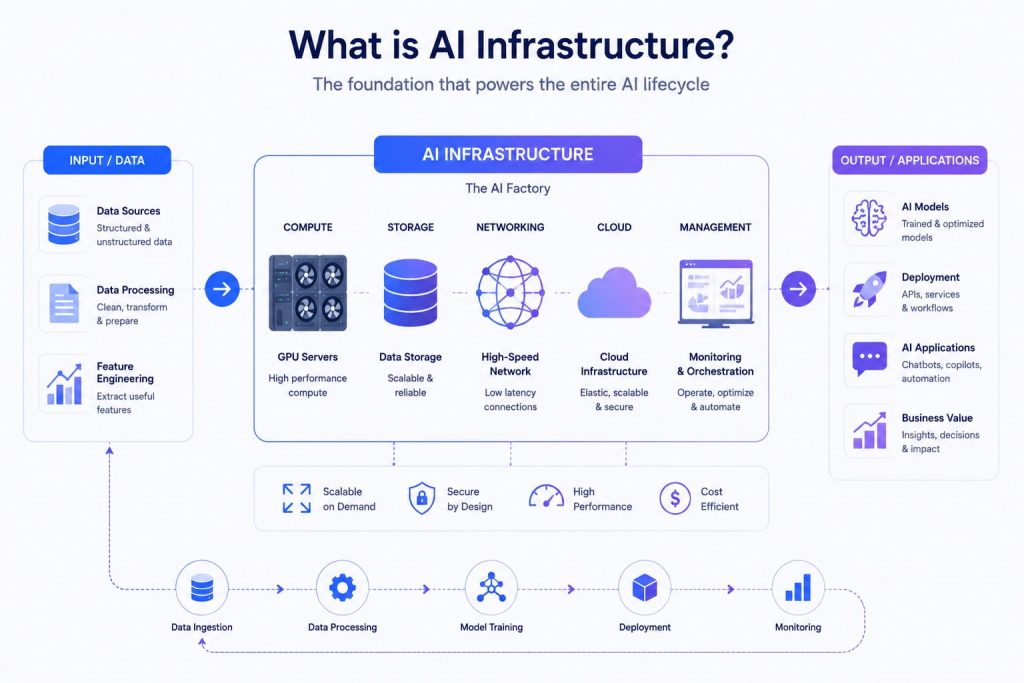

What is AI infrastructure and why does it matter for modern businesses? As AI adoption continues to grow, having the right foundation is essential to support everything from data processing to model deployment. With FPT AI Factory, organizations can leverage a complete ecosystem to build and scale AI applications more efficiently.

1. What is AI infrastructure?

AI infrastructure is the foundation used to build, train, deploy, and manage AI applications. It combines compute resources, data systems, networking, software frameworks, and operational tools to support AI workloads such as machine learning, deep learning, generative AI, and real-time inference. It also enables an AI Factory to operate at scale, turning data and models into deployable intelligence.

For example, when a company builds an AI chatbot, the infrastructure must support multiple stages including storing customer data, training or fine-tuning models with GPU resources, deploying the chatbot into production, and monitoring performance over time. Platforms such as FPT AI Factory combine GPU compute, AI development environments, and inference services into one ecosystem, helping developers and businesses build and scale AI applications more efficiently.

Data center servers powering scalable AI infrastructure and processing

2. Why AI needs infrastructure

AI systems require more than powerful algorithms to function effectively. Behind every successful AI application is a robust infrastructure that supports not only model training but the entire lifecycle, from data processing to deployment and continuous optimization. Without proper infrastructure, AI models cannot scale, perform reliably, or deliver real-world value.

- Support for the full AI lifecycle: Infrastructure is needed not only for training models but also for data ingestion, preprocessing, deployment, and monitoring.

- Handling large-scale data: AI relies on massive datasets that require strong storage and processing capabilities.

- Computational power for complex models: Advanced models, especially deep learning and generative AI, demand high-performance computing resources.

- Scalability and flexibility: Infrastructure allows AI systems to scale as workloads and data grow over time.

- Reliable deployment and operation: Infrastructure ensures models run smoothly in real-world environments with consistent performance.

In short, AI infrastructure is essential because AI is not a one-time process. From building and training to deploying and maintaining models, every stage depends on a stable and scalable infrastructure to function effectively.


3. Key Components of AI Infrastructure

To fully understand how AI infrastructure operates, it is important to break down its core components. Each element plays a specific role in ensuring that AI systems run efficiently and scale effectively.

3.1. Compute resources

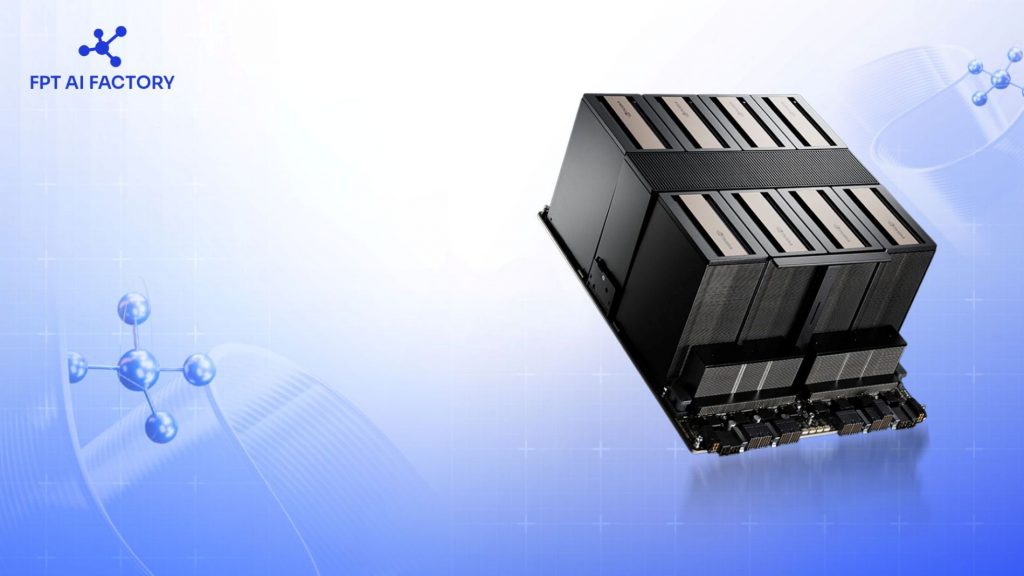

Compute resources are the backbone of AI infrastructure, providing the high-performance computing power needed to train, fine-tune, and run machine learning models. GPUs are especially important due to their ability to handle parallel processing, making them ideal for complex AI workloads such as deep learning. Modern AI systems increasingly use on-demand compute to improve flexibility and cost efficiency. For example, FPT AI Factory’s GPU Virtual Machine offers an on-demand GPU layer for training, fine-tuning, and scalable experimentation. This allows businesses to scale resources dynamically without large upfront investment.

Advanced computing infrastructure supports scalable AI development and deployment. (Source: FPT AI Factory)

3.2. Data storage and processing

AI systems rely on large volumes of structured and unstructured data, making efficient storage and processing essential. Data must be collected, cleaned, and transformed before it can be used for model training. This often involves data lakes, data warehouses, and both batch and real-time processing pipelines. A strong data layer ensures high data quality and accessibility, which directly impacts model performance. Without proper data infrastructure, AI systems cannot deliver accurate and reliable results.
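
To make the difference between batch and real-time processing concrete, here is a minimal Python sketch. It computes the same average in two ways: once over a complete dataset (batch) and once incrementally as each value arrives (streaming). The names `batch_mean`, `RunningMean`, and the sample readings are hypothetical and chosen only for illustration; production pipelines would normally rely on dedicated data processing tools.

```python
def batch_mean(values):
    """Batch style: the whole dataset is available before processing starts."""
    return sum(values) / len(values)


class RunningMean:
    """Streaming style: the statistic is updated one record at a time."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0

    def update(self, value):
        # Incremental mean update; no need to keep earlier values in memory.
        self.count += 1
        self.mean += (value - self.mean) / self.count
        return self.mean


# Hypothetical sensor readings used only for this example.
readings = [4.0, 8.0, 6.0, 2.0]

stream = RunningMean()
for reading in readings:
    stream.update(reading)

print(batch_mean(readings), stream.mean)
```

Both approaches arrive at the same result. The batch version needs all the data up front, while the streaming version has an up-to-date answer after every record, which is what real-time use cases depend on.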

3.3. Networking and connectivity

Networking plays a critical role in ensuring seamless communication between different components of AI infrastructure. It enables fast and reliable data transfer between storage systems, compute resources, and applications. Low latency and high bandwidth are especially important in distributed environments where workloads are processed across multiple nodes. This is essential for use cases such as distributed training and real-time AI applications. Efficient networking helps maintain performance and reduce delays in data-intensive operations.
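
A rough back-of-the-envelope calculation shows why bandwidth and latency matter. The Python sketch below estimates transfer time as latency plus data size divided by bandwidth. It is a simplification that ignores protocol overhead and congestion, and the function name and figures are assumptions made for illustration, not measurements from any specific platform.

```python
def transfer_seconds(size_gb, bandwidth_gbps, latency_ms=0.0):
    """Estimate the time to move `size_gb` gigabytes over a link.

    Assumes decimal units (1 GB = 8 gigabits) and ignores protocol
    overhead, so real transfers will be somewhat slower.
    """
    size_gigabits = size_gb * 8
    return latency_ms / 1000 + size_gigabits / bandwidth_gbps


# Hypothetical example: moving a 10 GB training shard between nodes.
slow_link = transfer_seconds(10, bandwidth_gbps=10, latency_ms=0.5)
fast_link = transfer_seconds(10, bandwidth_gbps=100, latency_ms=0.5)

print(f"10 Gbit/s link:  {slow_link:.4f} s")
print(f"100 Gbit/s link: {fast_link:.4f} s")
```

In distributed training, data and model updates are exchanged between nodes many times, so a difference of this size per transfer accumulates quickly.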

3.4. Machine learning frameworks

Machine learning frameworks provide the tools and libraries needed to build, train, and evaluate AI models. Popular frameworks such as TensorFlow and PyTorch support different learning approaches and help optimize model performance. They also offer pre-built components that simplify development and reduce time-to-market. By using these frameworks, teams can experiment faster and iterate more efficiently. This plays a key role in accelerating AI adoption within organizations.

Machine learning frameworks are often used inside shared development environments where teams can build, test, and manage AI models more easily. For multi-user AI development, you can also explore how JupyterHub supports collaborative notebook-based workflows.

Machine learning frameworks streamline AI model development and iteration

3.5. MLOps and orchestration tools

MLOps platforms help manage the full lifecycle of AI models, from development to deployment and monitoring. They enable automation, version control, and collaboration between data scientists and engineers. These tools also support continuous integration and deployment (CI/CD), ensuring consistency across environments. In addition, MLOps helps monitor model performance and detect issues such as model drift over time. This allows organizations to maintain reliable and scalable AI systems in production.
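
As a simplified illustration of drift detection, the Python sketch below measures how far the average of recent input values has moved from a baseline, expressed in baseline standard deviations. The function name, the sample values, and the threshold of 3.0 are hypothetical; real MLOps tools typically use more robust statistical tests across many features.

```python
from statistics import mean, stdev


def drift_score(baseline, recent):
    """Shift of the recent mean from the baseline mean, in baseline standard deviations."""
    return abs(mean(recent) - mean(baseline)) / stdev(baseline)


# Hypothetical feature values: what the model saw at training time
# versus two later samples from production.
baseline = [0.48, 0.52, 0.50, 0.49, 0.51]
stable = [0.50, 0.51, 0.49]
shifted = [0.70, 0.72, 0.68]

THRESHOLD = 3.0  # assumed alert threshold, chosen for illustration

for name, sample in [("stable", stable), ("shifted", shifted)]:
    score = drift_score(baseline, sample)
    status = "drift suspected" if score > THRESHOLD else "ok"
    print(f"{name}: score={score:.2f} -> {status}")
```

A check like this could run on a schedule, with an alert or retraining job triggered whenever the score crosses the threshold.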

4. How AI Infrastructure Works

4.1. Collect and prepare data

The process starts by gathering data from databases, applications, sensors, or external sources. This data is cleaned, organized, and structured to suit machine learning models. Preparation may include labeling, filtering noise, and normalizing inputs. A well-designed data pipeline ensures high-quality data, which is essential for accurate and reliable AI outcomes.
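
The preparation steps described above can be sketched in a few lines of Python. This minimal example filters out unusable records and rescales a numeric field to the 0 to 1 range (min-max normalization). The `prepare` function, the `value` field, and the sample records are hypothetical; a real pipeline would handle many more fields and edge cases.

```python
def prepare(records):
    """Drop unusable records, then min-max normalize the `value` field."""
    # Noise filtering: keep only records whose value is a number.
    cleaned = [r for r in records if isinstance(r.get("value"), (int, float))]
    if not cleaned:
        return []

    lowest = min(r["value"] for r in cleaned)
    highest = max(r["value"] for r in cleaned)
    span = (highest - lowest) or 1.0  # avoid dividing by zero if all values match

    # Normalization: rescale every value into the range [0, 1].
    return [{**r, "value": (r["value"] - lowest) / span} for r in cleaned]


# Hypothetical raw records, one of them with a missing value.
raw = [
    {"id": 1, "value": 10},
    {"id": 2, "value": None},
    {"id": 3, "value": 30},
    {"id": 4, "value": 20},
]

prepared = prepare(raw)
print(prepared)
```

The record with the missing value is dropped, and the remaining values are mapped to 0.0, 1.0, and 0.5 respectively.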

4.2. Train models with compute resources

Prepared data is used to train machine learning and deep learning models. This requires substantial computational power, often provided by GPUs or specialized hardware capable of parallel processing. Algorithms learn patterns from the data and continuously adjust parameters to improve performance. Scalable infrastructure allows teams to experiment with models and optimize results efficiently.
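
To show what "adjusting parameters" means in practice, here is a minimal training loop in plain Python. It fits a straight line to a handful of points using gradient descent. The data, learning rate, and epoch count are assumptions picked for illustration. Real deep learning models follow the same basic loop but with millions or billions of parameters, which is why they need GPUs.

```python
def train_linear(xs, ys, lr=0.05, epochs=2000):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Move each parameter a small step against its gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical training data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

w, b = train_linear(xs, ys)
print(f"learned w={w:.3f}, b={b:.3f}")
```

After enough iterations, the learned values approach the slope of 2 and intercept of 1 that generated the data.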

4.3. Deploy models to production

After training, AI models are deployed to production to generate predictions and support real-world applications. A robust infrastructure ensures deployments remain stable, efficient, and scalable across different environments. FPT AI Factory’s Serverless Inference helps businesses bring models into production faster through a fully managed serving solution. It enables on-demand inference processing, automatically scaling based on usage without requiring teams to manage complex infrastructure. With built-in APIs and monitoring tools, organizations can maintain performance and reliability over time.

Serverless Inference delivers flexible scaling, easy integration, and cost efficiency. (Source: FPT AI Factory)
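
The general idea behind usage-based scaling can be illustrated with a short Python sketch. It is a generic example and does not describe how FPT AI Factory's Serverless Inference is implemented. The function name, the per-replica capacity, and the replica limit are all hypothetical.

```python
import math


def desired_replicas(requests_per_sec, capacity_per_replica, max_replicas=10):
    """Return how many model replicas are needed for the current load.

    Scales to zero when there is no traffic and never exceeds `max_replicas`.
    """
    if requests_per_sec <= 0:
        return 0
    needed = math.ceil(requests_per_sec / capacity_per_replica)
    return min(max_replicas, needed)


# Hypothetical traffic levels, assuming one replica serves 50 requests per second.
for load in [0, 30, 120, 10_000]:
    print(f"{load} req/s -> {desired_replicas(load, capacity_per_replica=50)} replicas")
```

With a managed service, this kind of decision is made by the platform, so teams pay for capacity only while requests are being served.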

4.4. Monitor systems and update models over time

Continuous monitoring tracks key metrics like accuracy, latency, and system stability. Models can be retrained or updated as new data arrives or performance changes. This feedback loop ensures AI applications remain reliable, scalable, and aligned with evolving business needs, maintaining long-term effectiveness.
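
A minimal Python sketch of such a feedback loop is shown below. It keeps a rolling window of recent predictions and flags the model for review when accuracy falls or latency rises beyond set limits. The `ModelMonitor` class and its thresholds are hypothetical and intended only to illustrate the idea.

```python
from collections import deque


class ModelMonitor:
    """Track accuracy and latency over a rolling window of recent predictions."""

    def __init__(self, window=100, min_accuracy=0.9, max_latency_ms=200.0):
        self.outcomes = deque(maxlen=window)   # True if the prediction was correct
        self.latencies = deque(maxlen=window)  # response time in milliseconds
        self.min_accuracy = min_accuracy
        self.max_latency_ms = max_latency_ms

    def record(self, correct, latency_ms):
        self.outcomes.append(bool(correct))
        self.latencies.append(float(latency_ms))

    def accuracy(self):
        return sum(self.outcomes) / len(self.outcomes)

    def avg_latency_ms(self):
        return sum(self.latencies) / len(self.latencies)

    def needs_attention(self):
        """True when recent accuracy or latency is outside the allowed range."""
        if not self.outcomes:
            return False
        return (self.accuracy() < self.min_accuracy
                or self.avg_latency_ms() > self.max_latency_ms)


# Hypothetical usage with a small window so the effect is easy to see.
monitor = ModelMonitor(window=4)
for _ in range(4):
    monitor.record(correct=True, latency_ms=50)
healthy = monitor.needs_attention()

for _ in range(2):
    monitor.record(correct=False, latency_ms=50)
degraded = monitor.needs_attention()

print(healthy, degraded)
```

When the check returns true, a team might investigate the cause, roll back to an earlier model version, or start a retraining job with newer data.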

5. Difference between AI infrastructure and IT infrastructure

To effectively support AI initiatives, it is essential to recognize that AI infrastructure differs from traditional IT infrastructure, since each is designed for distinct workloads and performance requirements.

| Feature | AI Infrastructure | IT Infrastructure |
| --- | --- | --- |
| Purpose | Built specifically for AI development, training, deployment, and continuous optimization | Designed to support general IT operations and enterprise applications |
| Compute | Uses GPUs and specialized hardware for parallel processing and high-performance workloads | Relies mainly on CPUs for standard computing tasks |
| Data Handling | Manages large volumes of structured and unstructured data for AI models | Handles structured, transactional, and business data |
| Software Stack | Includes ML frameworks, data pipelines, and MLOps tools (e.g., model training and orchestration) | Uses traditional enterprise software such as ERP, CRM, and databases |
| Scalability | Highly flexible, scales dynamically based on data and model complexity | Typically scales incrementally based on system demand |
| Use Case Focus | Supports AI, machine learning, and advanced analytics applications | Supports business processes, communication tools, and IT services |

6. Why AI Infrastructure Matters

AI infrastructure is more than a technical setup. It is a strategic resource that allows organizations and governments to scale AI efficiently, secure their data, and remain competitive in an AI-driven world.

6.1. For businesses and AI developers

AI infrastructure is essential for both businesses and AI developers, providing the foundation to build, deploy, and scale AI systems effectively. For organizations, it enables AI adoption at scale and improves operational efficiency. For AI developers, including data scientists, AI engineers, AI researchers, and application developers, it removes infrastructure barriers and simplifies the process of developing and deploying models.

Key benefits include:

- Accelerate development and shorten time-to-market for AI applications

- Support end-to-end workflows from experimentation to production

- Scale workloads efficiently as data and usage grow

- Optimize costs with flexible, on-demand resources

- Maintain stable performance across environments

- Enable continuous model improvement through MLOps and monitoring

This aligns with FPT AI Factory’s positioning as an AI developer cloud, serving both individual developers and AI teams in enterprises and research organizations.

6.2. For governments and regions

For governments and regions, AI infrastructure is essential for building sovereign AI capabilities and ensuring long-term technological independence.

- Strengthen data sovereignty and maintain control over AI systems

- Reduce reliance on external platforms and foreign infrastructure

- Ensure compliance with local regulations and data governance standards

- Build national AI capabilities and accelerate digital transformation

- Foster innovation ecosystems and develop local AI talent

- Enhance economic competitiveness through scalable AI adoption

Building a reliable AI infrastructure is essential for organizations looking to scale AI effectively. With FPT AI Factory, users can access a full ecosystem of tools for training, deployment, and model operations in one platform. Individual users, including freelancers and professionals, can create an account and join the $100 Starter Plan to explore AI Notebook, GPU services, and Serverless Inference, while businesses and organizations with more advanced infrastructure or deployment needs can contact the FPT AI Factory team for tailored support.

Contact information:

- Hotline: 1900 638 399

- Email: support@fptcloud.com
